Master AI SEO Hallucinations, Or Fail

You must actively guard against AI hallucinations. Ignoring their triggers leads to inaccurate, low-quality content that damages your site authority and rankings.

- Always fact-check AI-generated data, especially dates and statistics.

- Provide extremely specific prompts to guide AI output and prevent fabrication.

- AI is a powerful assistant, but human oversight remains critical for quality SEO.

If you're relying on AI to write SEO content without human review, stop reading now. This guide won't save you.

Outdated Data: The Time Warp Trap That Kills Authority

I once saw an AI article confidently cite a "recent study from 2022" in early 2026. That's a huge red flag. Your content loses all credibility when it uses old information. This fails when your AI's training data cutoff is too old for the topic at hand.

AI models are not always connected to the live internet. They learn from vast datasets. These datasets have a "cutoff date." Anything happening after that date simply isn't known to the AI. This means AI can't report on the latest trends or algorithm updates. It's like asking someone from 2024 about 2026 SEO strategies. They just don't know.

The trap is thinking your AI is always up-to-date. It's not. For example, if you ask for "the best SEO tools in 2026," and the AI's data cuts off in late 2024, it is likely to hallucinate. It will either give you tools popular in 2024 or invent new ones. This is a common trigger for bad information. Always check the model's documented knowledge cutoff before you trust time-sensitive output. Some tools, like Postlabs, integrate real-time data to mitigate this. But even then, vigilance is key.

You need to verify all time-sensitive claims. This includes dates, statistics, and product names. A quick Google search can save your reputation. Don't let your content become a historical artifact. Keep it fresh and accurate. Otherwise, your readers will notice the outdated advice. They will quickly bounce, and your rankings will suffer.
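
If you want a first pass before the manual check, a small script can flag year references that look old. This is a minimal sketch, not a fact-checker: the function name `find_stale_years` and the `max_age` threshold are assumptions made up for illustration, and it only surfaces candidates for a human to verify.

```python
import re
from datetime import date
from typing import List, Optional

# Matches four-digit years from 1900 to 2099.
YEAR_PATTERN = re.compile(r"\b(?:19|20)\d{2}\b")


def find_stale_years(
    text: str, current_year: Optional[int] = None, max_age: int = 1
) -> List[int]:
    """Return the distinct years in `text` that are more than `max_age` years old.

    `max_age` is an arbitrary threshold chosen for this sketch; tune it per topic.
    """
    if current_year is None:
        current_year = date.today().year
    years = {int(match.group(0)) for match in YEAR_PATTERN.finditer(text)}
    return sorted(year for year in years if current_year - year > max_age)


draft = 'A "recent study from 2022" names the best SEO tools in 2026.'
print(find_stale_years(draft, current_year=2026))  # prints [2022]
```

A flagged year isn't automatically wrong. It just tells your editor where to look first.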

AI Hallucination: An AI-generated output that is factually incorrect, nonsensical, or deviates from reality, often presented with high confidence.

I've seen AI confidently state that a certain Google update happened in 2025 when it actually rolled out in 2024. This isn't malice. It's a limitation of its training. Your job is to be the human editor. You are the final gatekeeper of truth. This is especially true for rapidly changing fields like SEO. New best practices emerge constantly. Old ones become obsolete. Your content must reflect this.

The "Just Make It Up" Prompt: When Vague Instructions Lead to Fiction

We all want AI to be smart. But it's not a mind reader. If you give it a vague prompt, it will try its best to fill in the gaps. This often means it invents details. I once asked for "three unique SEO strategies." The AI gave me "quantum SEO" and "telepathic link building." Not fun. This fails when your prompt lacks specific constraints and clear data sources.

The AI doesn't know what you don't tell it. If you ask for "benefits of X," it will list generic benefits. If you ask for "benefits of X for small businesses in 2026, citing recent case studies," it might hallucinate those case studies. It's trying to please you. It wants to complete the task. But it lacks real-world experience. It doesn't know what a "real" case study looks like. So, it fabricates one.

Pros of AI in SEO Content

- Accelerated Content Creation: Generates drafts quickly, saving hours of initial writing time.

- Scalable Output: Produces large volumes of content for diverse topics and keywords efficiently.

- Idea Generation: Provides fresh angles and outlines, overcoming writer's block effectively.

Cons of AI in SEO Content

- Hallucination Risk: Can invent facts, dates, or sources, leading to factual errors and reputational damage.

- Lack of Nuance: Struggles with complex topics, irony, or deep industry insights, resulting in generic text.

- Ethical Concerns: Raises questions about originality, authorship, and the potential for misinformation spread.

Specificity is your best friend. Instead of "write about SEO," try "write a 500-word article on how local businesses can use Google Business Profile for SEO in 2026, focusing on review management and local keyword optimization, and include one actionable tip for improving local rankings." See the difference? The more detail, the less room for invention. This is a core principle for effective AI SEO automation. You need to guide the AI, not just point it in a general direction.

Always define your audience, your desired tone, and any specific data points needed. If you need statistics, tell the AI to write "according to [source]" and to say plainly when it has no source. This pushes it to either name a source or admit it doesn't have one. It's a simple trick. It helps prevent those embarrassing made-up numbers, though you still need to confirm that any named source actually exists. Your content will be much more reliable.
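
One way to make that specificity a habit is to build prompts from a fixed template instead of typing them freehand. The sketch below is an assumption about how you might structure it: `PromptSpec` and `build_prompt` are hypothetical names, and the output is a plain string you paste into whatever AI tool you use.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class PromptSpec:
    """Hypothetical container for the details a specific prompt should carry."""
    topic: str
    audience: str
    tone: str
    word_count: int
    focus_points: List[str] = field(default_factory=list)


def build_prompt(spec: PromptSpec) -> str:
    """Assemble a constrained prompt that leaves little room for invention."""
    lines = [
        f"Write a {spec.word_count}-word article on {spec.topic}.",
        f"Audience: {spec.audience}.",
        f"Tone: {spec.tone}.",
    ]
    if spec.focus_points:
        lines.append("Focus on: " + "; ".join(spec.focus_points) + ".")
    # Source constraints taken from the advice above.
    lines.append('For every statistic, write "according to [source]" and name the source.')
    lines.append("If you do not have a source, say so instead of inventing one.")
    return "\n".join(lines)


spec = PromptSpec(
    topic="how local businesses can use Google Business Profile for SEO in 2026",
    audience="local business owners",
    tone="practical",
    word_count=500,
    focus_points=["review management", "local keyword optimization"],
)
print(build_prompt(spec))
```

The template doesn't stop hallucinations. It just makes sure nobody on your team forgets the audience, the tone, or the source rule.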

Over-Optimization Gone Wrong: The Keyword Stuffing Nightmare

We've all been there. You tell the AI to "include keyword X 10 times." The result? Unreadable, robotic text. This isn't SEO. It's a keyword stuffing nightmare. This fails when you prioritize keyword density over natural language and user experience.

AI is good at following instructions. Sometimes too good. If you push it to hit a specific, high keyword density, it will. But it won't care about readability. It will jam those keywords in. The content will sound unnatural. Readers will notice. Google will notice. Your rankings will drop. I've seen sites penalized for this. It's not worth it.

Warning: Over-Optimizing Keywords

Do not force AI to hit exact, high keyword densities. This leads to unnatural language, poor user experience, and potential search engine penalties for keyword stuffing.

The goal of SEO is to provide value to users. Keywords are important. But they should flow naturally. AI, left unchecked, can turn a helpful article into a keyword-laden mess. This is a common hallucination trigger. The AI "hallucinates" that this is good SEO. It thinks it's doing what you asked. But it's actually creating bad content.

Instead, focus on semantic SEO. Use related terms. Use synonyms. Let the AI write naturally. Then, review and gently optimize. Tools like Postlabs can help you find relevant keywords and entities without resorting to stuffing. They guide you towards natural language optimization. Your content will be more engaging. It will rank better in the long run. Remember, Google rewards quality and relevance. Not keyword counts.
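
You can use a density number as a warning light during review, never as a target. Here is a rough sketch that assumes one common definition of density (keyword occurrences times words in the keyword, divided by total words). The 3% threshold in `is_stuffed` is an arbitrary value picked for illustration, not a Google rule.

```python
import re
from typing import List


def _tokenize(text: str) -> List[str]:
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z0-9']+", text.lower())


def keyword_density(text: str, keyword: str) -> float:
    """Share of the words in `text` taken up by exact occurrences of `keyword`."""
    words = _tokenize(text)
    phrase = _tokenize(keyword)
    if not words or not phrase:
        return 0.0
    size = len(phrase)
    hits = sum(
        1 for i in range(len(words) - size + 1) if words[i:i + size] == phrase
    )
    return hits * size / len(words)


def is_stuffed(text: str, keyword: str, threshold: float = 0.03) -> bool:
    """Flag drafts above an assumed, arbitrary density threshold for human review."""
    return keyword_density(text, keyword) > threshold


draft = "Best SEO tools: SEO tools for SEO pros who need SEO tools."
print(keyword_density(draft, "SEO tools"), is_stuffed(draft, "SEO tools"))
```

If a draft trips the flag, read it out loud. Your ear is a better judge than the number.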

The "Expert" Who Never Was: When AI Lacks Real-World Nuance

I once used an AI to write about a complex technical SEO issue. It sounded plausible. But a quick check revealed it missed a crucial edge case. It gave advice that would actually break the site. This part sucks. This fails when AI attempts to provide expert-level advice without genuine understanding or practical experience.

AI models are trained on text. They learn patterns. They can mimic expert language. But they don't have real-world experience. They haven't debugged a broken sitemap. They haven't seen a site's traffic tank after a bad update. This lack of practical knowledge is a huge hallucination trigger. It leads to generic, sometimes dangerous, advice.

"AI is a powerful tool for content generation, but it lacks the human intuition and experience needed to truly understand complex SEO nuances."

— General Consensus, SEO Industry Experts

Think about YMYL (Your Money or Your Life) topics. Health, finance, legal advice. AI can generate content on these. But it lacks the authority. It lacks the E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) that Google values. It might hallucinate "facts" or "recommendations" that are simply wrong. Or worse, harmful. You wouldn't trust a robot doctor, would you? The same applies to SEO advice.

This is where human expertise is irreplaceable. Use AI for drafting. Use it for brainstorming. But always have a human expert review and refine the content. Especially for critical topics. Add your own anecdotes. Inject your own insights. This makes the content unique. It makes it trustworthy. It prevents the bland, generic output that AI often produces. Any complete guide to AI content will emphasize human oversight. Your content needs that human touch. It needs real experience behind it.

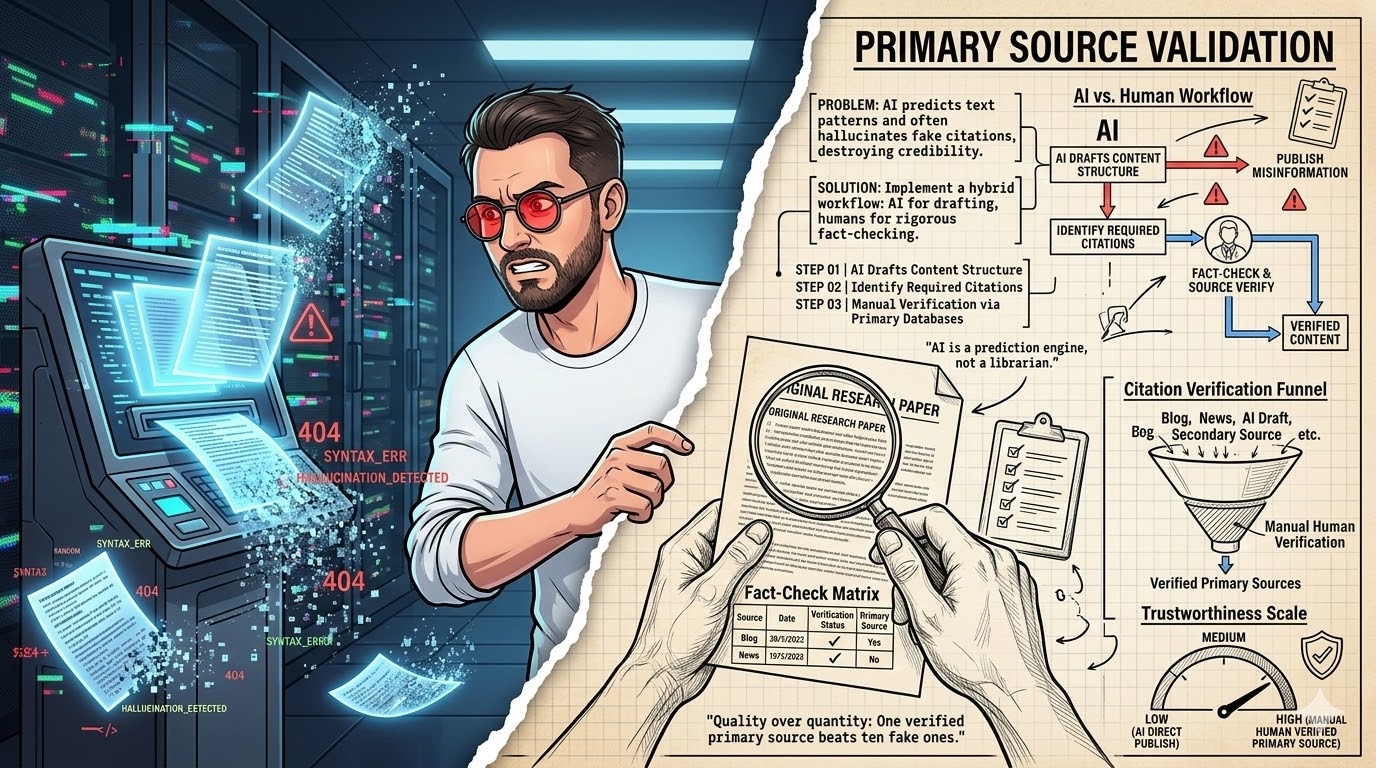

Inventing Data & Faking Sources: The Credibility Killer

I've seen AI invent entire studies, complete with fake author names and publication dates. It's weirdly convincing. This is a massive credibility killer. This fails when AI fabricates statistics, quotes, or sources to support its narrative, eroding trust with your audience.

AI models are designed to generate coherent text. If you ask for a statistic, and it doesn't have one in its training data, it might just create one. It doesn't know it's "lying." It's just completing the pattern. This is a common hallucination. It creates plausible-sounding but entirely false information. This is particularly dangerous for SEO content. You want to be seen as an authority. Not a source of misinformation.

Myth

AI can reliably cite real-time, specific data and studies for any topic.

Reality

AI often hallucinates statistics, dates, and even entire research papers if it cannot find an exact match in its training data, requiring strict human verification.

Imagine an article claiming "75% of businesses increased traffic by 200% using X strategy." Sounds great, right? But if the AI made that up, you're publishing fiction. Your readers will eventually find out. Your brand will suffer. Always fact-check numbers, percentages, and any attributed quotes. Use reputable sources. If you can't find the source, don't include the data. It's that simple.

This is where your editorial process becomes critical. Treat every AI-generated statistic as suspect. Assume it's a hallucination until proven otherwise. This might seem harsh. But it's the only way to maintain integrity. For a deeper dive into managing AI output, check out resources on AI SEO automation. They often cover best practices for verification. Your content needs to be built on a foundation of truth. Not made-up numbers.
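
To treat every statistic as suspect, your editor first needs a list of them. The sketch below pulls out each sentence that contains a percentage so it can go into a verification queue. The name `claims_to_verify` is hypothetical, and the sentence splitting is deliberately naive, so expect it to miss edge cases such as abbreviations.

```python
import re
from typing import List

# A number followed by a percent sign, e.g. "75%" or "3.5 %".
STAT_PATTERN = re.compile(r"\d+(?:\.\d+)?\s?%")
# Naive sentence boundary: whitespace that follows ., ! or ?
SENTENCE_SPLIT = re.compile(r"(?<=[.!?])\s+")


def claims_to_verify(text: str) -> List[str]:
    """Return every sentence that contains a percentage, for manual fact-checking."""
    sentences = SENTENCE_SPLIT.split(text.strip())
    return [sentence for sentence in sentences if STAT_PATTERN.search(sentence)]


draft = (
    "75% of businesses increased traffic by 200% using X strategy. "
    "Sounds great, right? Always fact-check numbers."
)
for claim in claims_to_verify(draft):
    print("VERIFY:", claim)
```

The script only finds the claims. Confirming each one against a reputable source is still a human job.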

Misinterpreting Search Intent: The Generic Content Trap

I once had an AI write about "best coffee makers." It gave me a bland list. It missed the nuance of "best coffee makers for espresso lovers" or "best budget coffee makers for students." This fails when AI generates broad, unspecific content that doesn't truly match the user's underlying search query.

Search intent is everything in SEO. Users aren't just typing keywords. They have a goal. They want to learn, buy, or find something specific. AI, without careful guidance, often produces generic content. It aims for the broadest possible interpretation of a keyword. This results in content that doesn't fully satisfy any specific intent. It's a common hallucination. The AI thinks it's being helpful. But it's just being vague.

This is a contrarian point. Many people think AI can just "figure out" intent. It can't. Not reliably. It needs you to define the intent. Are users looking for informational content? Navigational? Transactional? Commercial investigation? Your prompt must reflect this. If you want a product review, tell it to write a product review. If you want a "how-to" guide, specify the steps. Don't leave it to chance.
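
If you want intent to be impossible to skip, make it a required argument. This is a sketch under assumptions: the four intent types come from the paragraph above, but the instruction text attached to each one is my own illustrative wording, and `intent_prompt` is a hypothetical helper.

```python
from enum import Enum


class SearchIntent(Enum):
    INFORMATIONAL = "informational"
    NAVIGATIONAL = "navigational"
    TRANSACTIONAL = "transactional"
    COMMERCIAL_INVESTIGATION = "commercial investigation"


# Illustrative instructions per intent; adjust the wording to your own standards.
INTENT_INSTRUCTIONS = {
    SearchIntent.INFORMATIONAL: "Write a how-to guide with clearly numbered steps.",
    SearchIntent.NAVIGATIONAL: "Point the reader to the exact page or resource they want.",
    SearchIntent.TRANSACTIONAL: "Describe the offer and the steps to complete the purchase.",
    SearchIntent.COMMERCIAL_INVESTIGATION: "Write a product review that compares options for a named audience.",
}


def intent_prompt(keyword: str, intent: SearchIntent) -> str:
    """Prefix a content request with an explicit, human-defined search intent."""
    return (
        f'Target query: "{keyword}".\n'
        f"Search intent: {intent.value}.\n"
        f"{INTENT_INSTRUCTIONS[intent]}"
    )


print(intent_prompt("best budget coffee makers for students",
                    SearchIntent.COMMERCIAL_INVESTIGATION))
```

Because `intent` has no default value, nobody can generate content without deciding what the searcher actually wants.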

The better metric here isn't keyword density. It's user satisfaction and task completion. Does the content answer the user's question fully? Does it solve their problem? If the AI-generated content is too generic, it won't. Users will bounce. Google will see this. Your rankings will suffer. This is why a human touch is crucial. You understand your audience. You know their pain points. AI doesn't. It just processes text. Any solid guide to AI content will emphasize the importance of human-defined intent. This ensures your content actually connects with people.

The Echo Chamber Effect: When AI Learns Bad Habits

Weirdly enough, this happens more often than you think. AI learns from the internet, and the internet isn't always a bastion of truth or quality. If AI is trained on a lot of low-quality, repetitive, or even incorrect content, it will reproduce those patterns. I've seen AI repeat common SEO myths. This fails when AI perpetuates misinformation or poor practices because its training data is flawed or biased.

Think of it like this: if you only read bad books, you'll probably write bad books. AI is similar. If its training data includes a lot of content that uses outdated SEO tactics, or makes unsubstantiated claims, the AI might pick those up. It doesn't have a built-in "quality filter" beyond what its developers program. This can lead to hallucinations where the AI confidently presents bad advice as gospel. It's an echo chamber of mediocrity.

This is why diverse and high-quality training data is crucial for AI models. But as a user, you don't control that. What you control is your input and your review process. You need to be aware that the AI might be reflecting the worst parts of the internet. It might be giving you advice that was "true" five years ago but is harmful today. Or it might be repeating common misconceptions. This is a subtle but dangerous hallucination trigger.

Your role is to break the echo chamber. Challenge the AI's assumptions. If something sounds off, investigate. Don't just accept it because "the AI said so." Use your own expertise. Use your critical thinking. This is how you elevate your content beyond the average. This is how you ensure your content stands out. It's about using AI as a tool, not a replacement for thought. For advanced strategies in leveraging AI while avoiding these pitfalls, explore resources on AI SEO automation. They often provide insights into prompt engineering to counteract these biases.

AI Content Audit (2026)

| Item | Cost | Time | Verdict |
|---|---|---|---|
| AI Draft (Blog Post) | $5 (tool cost) | 15 min (draft) | Low (needs edit) |
| Human Edit (Fact Check) | $50 (editor) | 60 min (review) | High (prevents errors) |
| AI + Human (Final) | $55 total | 75 min (total) | Excellent (quality) |

What I Would Do in 7 Days to Combat AI Hallucinations

- Day 1: Audit Your Prompts. Go through your common AI prompts. Make them hyper-specific. Add constraints like "do not invent data" or "cite sources."

- Day 2: Implement a Fact-Checking Protocol. Assign a human editor to verify every statistic, date, and claim in AI-generated content. This is non-negotiable.

- Day 3: Focus on E-E-A-T. For YMYL content, use AI only for initial drafts. Inject significant human expertise, anecdotes, and unique insights.

- Day 4: Diversify AI Tools. Test different AI models. Some are better at certain tasks. See which ones hallucinate less for your specific needs.

- Day 5: Train Your Team. Educate everyone using AI on common hallucination triggers. Share examples of bad AI output.

- Day 6: Use Real-Time Data Integrations. Explore tools that pull current data. This helps with time-sensitive topics.

- Day 7: Prioritize User Intent. Before generating content, clearly define the user's search intent. Guide the AI to meet that specific need, not just broad keywords.

Your AI Content Sanity Checklist

- Did I verify all numbers, dates, and names?

- Is the content free of generic, vague statements?

- Does it sound like a real human wrote it, with genuine insights?

- Are all claims supported by verifiable evidence or clear disclaimers?

- Have I checked for any outdated SEO advice or practices?

- Does the content truly answer the user's specific search intent?

- Is the overall tone and information trustworthy and authoritative?

Frequently Asked Questions About AI Hallucinations in SEO

What is the biggest risk of AI hallucinations in SEO?

The biggest risk is publishing factually incorrect or misleading information. This damages your site's credibility, harms user trust, and can lead to lower search rankings and even penalties from Google.

Can AI tools prevent hallucinations entirely?

No. No AI tool can prevent hallucinations entirely. Tools can reduce the likelihood through better training data and guardrails. However, human oversight and critical review remain essential to catch and correct errors.

How often should I review AI-generated content for hallucinations?

You should review every piece of AI-generated content for hallucinations before publication. This is not optional. A thorough human edit is crucial for maintaining quality and accuracy.