AI for Primary Sources: Don’t Trust It Blindly

Do NOT rely on AI for primary source citation. It often hallucinates references, inventing sources that don’t exist and making your content untrustworthy.

- Speeds up initial research by summarizing complex topics.

- Fails to accurately cite real primary sources, requiring human verification.

- Generates content drafts for human fact-checking and source validation.

If your content absolutely requires verifiable, primary source citations, stop reading here; don’t use AI for that task directly.

The AI Citation Hallucination Trap: Why My Content Got Flagged (and Yours Will Too)

I once saw an AI invent a "study from the Journal of Cognitive Science, 2023" that simply didn’t exist. This isn’t a rare bug; it follows directly from how these models work. AI language models predict the most plausible next sequence of words. They don’t "know" facts or "verify" sources the way a human researcher does. When you ask for citations, they often generate plausible-sounding but entirely fabricated references. When AI invents sources, your content’s trustworthiness tanks, because readers will quickly spot the fakes.

This problem is especially critical for niches demanding high accuracy, like health, finance, or legal content. Publishing non-existent sources can damage your reputation. It can also lead to penalties from platforms that prioritize factual accuracy. We’re talking about a serious risk to your brand. Honestly, it’s not fun to backtrack and apologize for something an AI just made up.

Primary Source: An original document or artifact created at the time under study, offering direct evidence (e.g., research papers, government reports, raw data, historical records).

The trap is thinking AI can act as a librarian. It’s more like a very convincing storyteller. It pulls together information patterns it has learned. This means it can create a perfect-looking citation string, complete with author, year, and journal. The only problem? That journal article might be pure fiction. This is why a human touch is non-negotiable for serious content. You can leverage tools like Postlabs for content generation, but the source validation remains your job.

Warning: Trusting AI for Citations

Relying solely on AI for primary source citations is a critical mistake. It leads to publishing inaccurate or fabricated information, damaging your credibility and potentially incurring penalties from search engines or regulatory bodies.

I’ve personally spent hours chasing down AI-generated "facts" that led to dead ends. It’s a massive time sink. The initial speed boost you get from AI quickly evaporates when you have to fact-check every single reference. Always assume AI-generated citations are placeholders. They need your human eye. This is how you maintain content quality and authority.

Why AI Struggles with Real Sources: It’s a Prediction Engine, Not a Librarian

Here’s the thing: AI models are built on statistical patterns. They learn to generate text that looks and sounds correct. They don’t actually "understand" the content in a human sense. When you ask a language model for a primary source, it doesn’t look it up in a database of verified academic papers (unless the tool is explicitly connected to a search engine). Instead, it predicts what a credible citation *should* look like based on its training data. That’s why AI can’t cite reliably: it predicts text rather than verifying facts.

It’s like asking a poet to write a legal brief; the words sound right, but the facts are missing. The AI might pull fragments from various sources. Then it stitches them together into something new. This new thing often includes made-up details. This is especially true for specific dates, page numbers, or journal volumes. These are the exact details that make a citation verifiable. Without them, it’s just noise. This is where the human element becomes so important. You need to be the one to bridge that gap.

Pros of AI in Research Workflow

- Speeds up initial topic exploration and idea generation.

- Summarizes complex articles, saving reading time.

- Helps identify key concepts and potential research angles.

Cons of AI in Source Verification

- Frequent hallucination of non-existent sources and data.

- Inability to discern primary from secondary sources accurately.

- Requires extensive human fact-checking, which can cancel out the time saved.

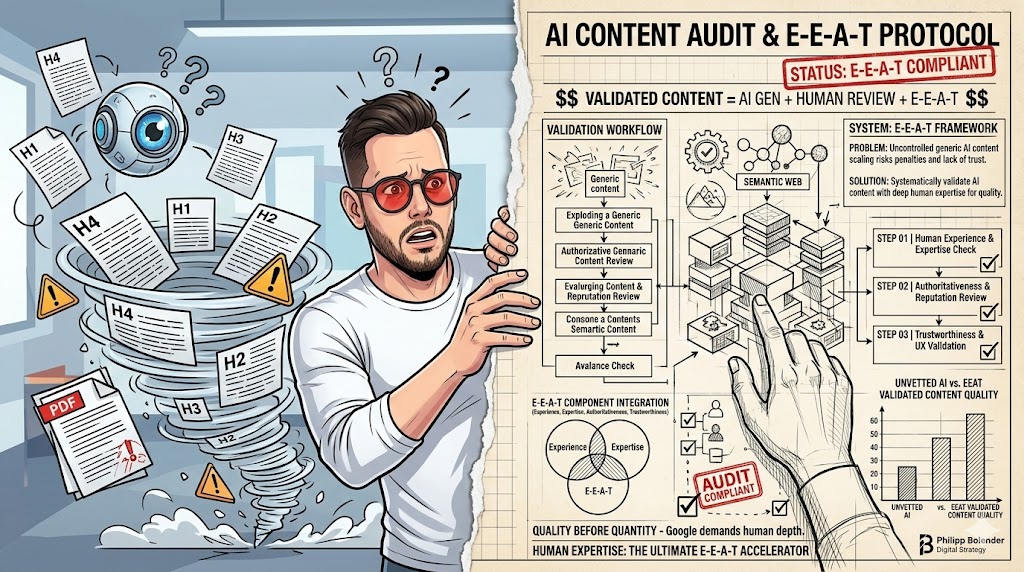

The core issue is intent. A human researcher *intends* to find truth and evidence. An AI *intends* to complete a linguistic pattern. These are two very different goals. For building content trustworthiness, you need the former. You can use AI for the heavy lifting of drafting. For instance, AI SEO automation can generate outlines or first drafts. But the critical step of source validation must be manual. It’s the only way to ensure accuracy. Otherwise, you’re building on sand.

I’ve seen content creators get excited about AI’s speed. They push content out fast. Then they face a backlash when readers point out fake sources. This isn’t just embarrassing; it erodes trust. Trust is hard to build. It’s even harder to get back. So, use AI for what it’s good at. Leave the rigorous source work to yourself or a dedicated researcher. It’s a small investment for huge returns in credibility.

My Weekend of Wasted Fact-Checking (A True Story)

Okay, quick detour. I once had a client who was super keen on using AI for everything. They wanted to scale content fast. "Just pump it out," they said. "AI will handle the sources." I warned them. They insisted. So, we tried it. The AI generated a blog post about a niche health topic. It was full of impressive-looking citations. Dates, journal names, even specific page numbers. Looked legit on the surface.

Then I started my usual verification process. My heart sank pretty fast. I spent 6 hours one Saturday trying to find a "report from the National Institute of Health on sleep patterns" that an AI had cited. It was pure fiction. Not a trace of it. I checked PubMed, Google Scholar, the NIH website itself. Nothing. Zero. Then I moved to the next citation. Same story. Another perfectly formatted, completely made-up source. It was frustrating. I mean, really frustrating.

The entire weekend vanished. I was supposed to be relaxing. Instead, I was playing detective for ghost citations. This wasn’t just a waste of my time. It was a waste of the client’s money. We had to scrap the entire article and start from scratch. The client learned a hard lesson that day: verifying AI-generated "facts" that turn out to be made up eats hours. It’s a brutal reality check. The promise of speed turned into a massive delay and a lot of extra work. Never again, I swore. Never again.

Building a Human-AI Hybrid Workflow: The Only Way to Trust Your Sources

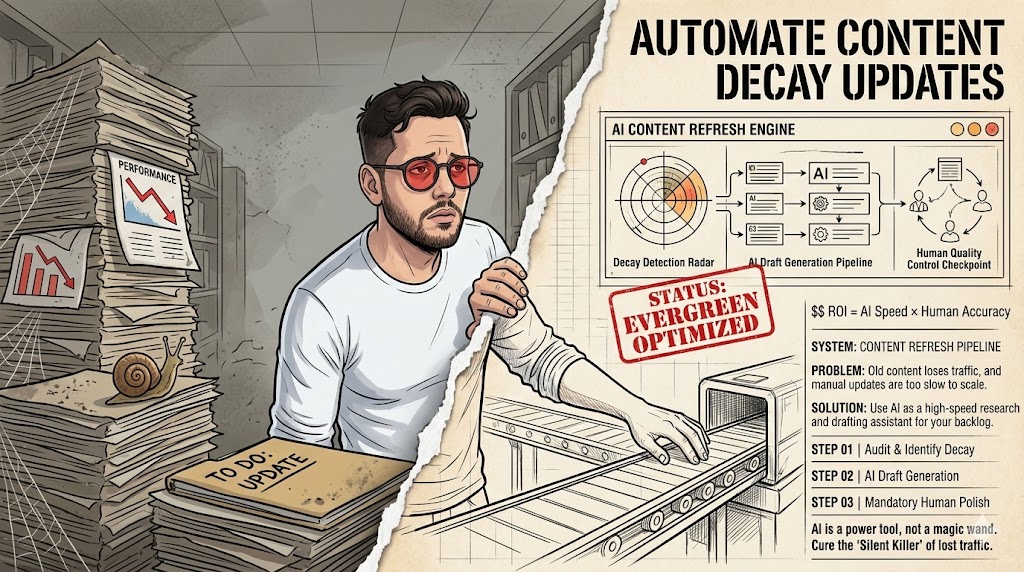

The smart play isn’t to ditch AI entirely. It’s to integrate it intelligently. Think of AI as your super-fast assistant, not your lead researcher. Your content will lack authority if you skip the human verification step. A hybrid workflow means AI handles the initial heavy lifting. This includes generating outlines, drafting sections, or summarizing complex ideas. Then, a human steps in for the critical tasks. These tasks include fact-checking, source validation, and adding nuanced insights. This approach leverages AI’s speed without sacrificing accuracy.

We implemented a two-person review process for high-stakes content. One person focuses on content flow and readability. The other acts solely as a fact-checker and source verifier. This separation of duties catches most errors. It also ensures that every claim is backed by a real, verifiable primary source. This might sound like more work. But it prevents the massive headaches of publishing misinformation. It also protects your brand’s reputation. It’s an investment in quality.

Start by using AI to brainstorm topics or create initial drafts. Tools like Postlabs can accelerate this phase significantly. Once you have a draft, that’s when the human work truly begins. Identify every claim that needs a source. Then, manually search for those sources. Prioritize primary research, academic journals, and reputable institutional reports. Don’t just look for *any* source. Look for the *best* source. This is how you build true authority. It’s a slower process, yes, but it’s the only reliable one.
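The verification step described above can be tracked with something as simple as a checklist in code. Below is a minimal Python sketch, assuming you keep one record per claim in a draft; the names `Claim`, `Status`, `mark_verified`, `mark_rejected`, and `blocking_claims` are hypothetical and not part of any tool mentioned in this article. It treats every AI-suggested citation as a placeholder until a named human has confirmed it.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class Status(Enum):
    PLACEHOLDER = "placeholder"  # default: suggested, not yet checked by a human
    VERIFIED = "verified"        # a human found and read a supporting source
    REJECTED = "rejected"        # source missing, fabricated, or irrelevant


@dataclass
class Claim:
    text: str
    source: Optional[str] = None       # e.g. a citation an AI draft proposed (hypothetical)
    status: Status = Status.PLACEHOLDER
    verified_by: Optional[str] = None  # the reviewer who confirmed the source


def mark_verified(claim: Claim, reviewer: str, source: str) -> None:
    """Record that a human located and read a source that supports the claim."""
    claim.source = source
    claim.status = Status.VERIFIED
    claim.verified_by = reviewer


def mark_rejected(claim: Claim) -> None:
    """Drop a citation that turned out to be fabricated or irrelevant."""
    claim.source = None
    claim.status = Status.REJECTED
    claim.verified_by = None


def blocking_claims(claims: List[Claim]) -> List[Claim]:
    """Claims that still block publication: anything not verified by a human."""
    return [c for c in claims if c.status is not Status.VERIFIED]


# Usage: an AI draft produced two cited claims; a fact-checker reviews both.
draft = [
    Claim("Claim A from the draft", source="AI-suggested citation"),
    Claim("Claim B from the draft", source="Another AI-suggested citation"),
]
mark_verified(draft[0], reviewer="fact-checker", source="Original report located and read")
mark_rejected(draft[1])
print([c.text for c in blocking_claims(draft)])  # ['Claim B from the draft']
```

A rejected claim still blocks publication in this sketch: either a human finds a real source for it, or the claim gets cut.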

Remember, AI is a tool. It amplifies your efforts. But it doesn’t replace your judgment. Especially when it comes to truth and evidence. A good workflow ensures that AI handles the grunt work. You handle the critical thinking. This balance is key to producing high-quality, trustworthy content in 2026. Anything less is a gamble. And honestly, it’s a gamble you’ll probably lose.

The Myth of "AI-Verified" Sources: Don’t Fall for the Gimmick

There’s a growing trend of AI tools claiming to "verify" sources. They promise to check facts or ensure accuracy. Sounds great, right? The reality is often far less impressive. You’ll publish misinformation if you believe AI can self-correct its source errors. These tools typically perform a superficial check. They might confirm if a URL exists. Or they might see if keywords match. They don’t perform deep semantic analysis. They certainly don’t assess the methodological rigor of a study. That’s a human-level task.

I’ve seen some tools claim "source verification," but they link to irrelevant or weak secondary sources. For example, an AI might "verify" a claim by linking to a blog post that *mentions* a study. It won’t link to the actual study itself. Or it might link to a news article summarizing research. This isn’t primary source verification. It’s just finding related content. This distinction is crucial for content trustworthiness. You need to understand the difference. Otherwise, you’re just adding layers of abstraction.

Myth

AI can reliably verify primary sources and fact-check content automatically.

Reality

AI models predict plausible text, often hallucinating sources. Human verification is essential for primary source accuracy and content trustworthiness, as AI lacks true comprehension or fact-checking capabilities.

The trap is the word "verified." It implies a level of scrutiny that AI simply cannot provide. It gives a false sense of security. This can lead content creators to lower their guard. They might publish content they *think* is fact-checked. In reality, it’s still riddled with potential inaccuracies. This is why you must remain skeptical. Always assume you need to do the real work yourself. No AI tool, no matter how advanced, can replace your critical judgment. Not yet, anyway.

My advice? Treat any "AI-verified" claim with extreme caution. Use these features as a starting point, not a finish line. They might help you find *potential* sources. They won’t tell you if those sources are actually good, relevant, or even real. The ultimate responsibility for accuracy still rests with you. This is a non-negotiable part of building a reputable content presence. Don’t let a catchy marketing term trick you into bad practices.

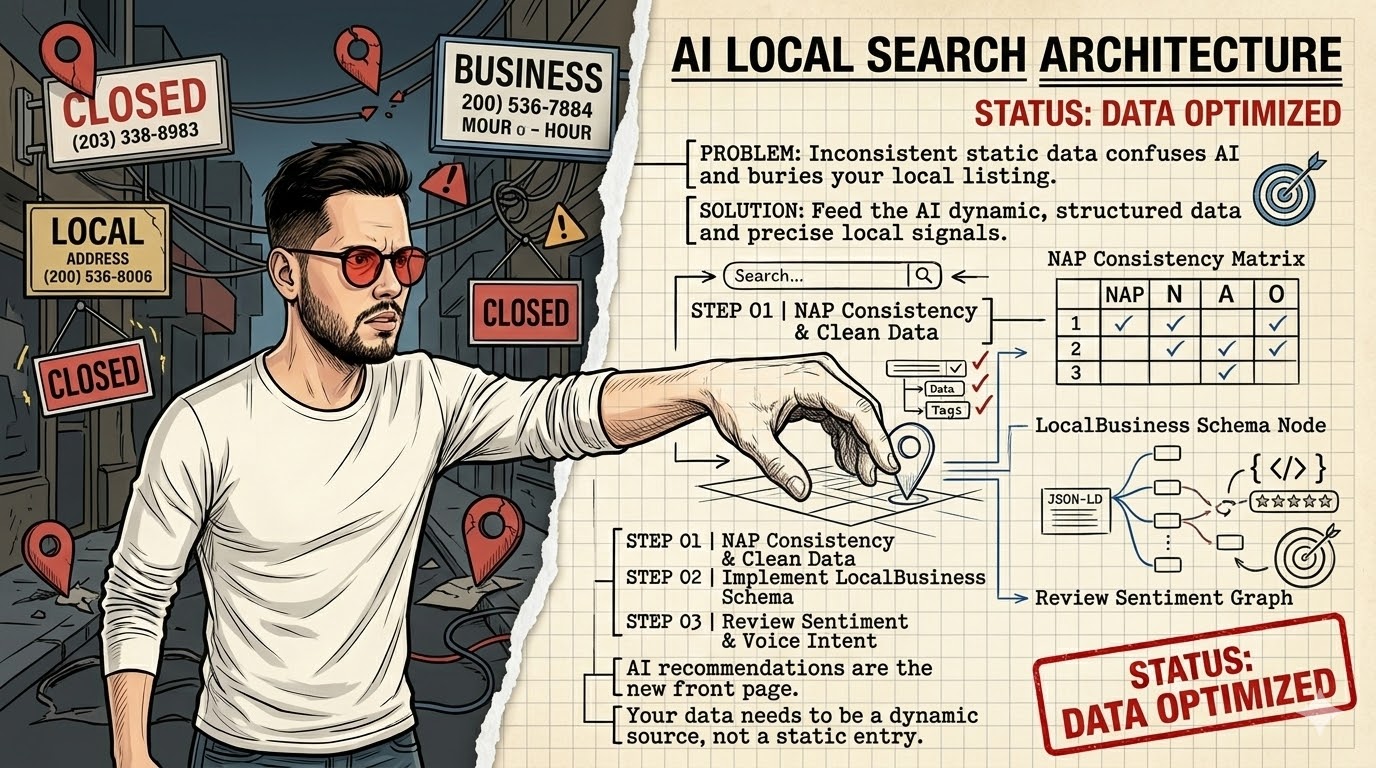

Beyond Google: Finding Primary Sources in the Age of AI Overload

Relying only on consumer search engines like Google for primary sources is a common mistake, and it leaves your research shallow. Google is great for general information. It’s less effective for deep academic or governmental research. Its ranking tends to favor popular content, which often means secondary sources, news articles, or blog posts. True primary sources are often buried deeper. They might be behind paywalls or in specialized databases. You need to know where to look. Otherwise, you’ll miss the real gold.

I often dig into university databases or government archives for specific reports. Think JSTOR, PubMed, ScienceDirect, or government agency websites (e.g., CDC, FDA, EPA). These are treasure troves of original research. They offer the raw data and methodologies. This is the stuff that builds real authority. It’s not always easy to navigate these sites. But the effort pays off. It gives your content a level of depth and credibility that few others achieve. This is how you stand out.

“The greatest obstacle to discovery is not ignorance, it is the illusion of knowledge.”

— Daniel J. Boorstin, Historian

Another powerful strategy is to use Google Scholar. It specifically indexes scholarly literature. Its advanced search lets you filter by publication date, author, and even specific journals. This helps you cut through the noise and brings you much closer to peer-reviewed studies, though not everything it indexes is peer reviewed (it also includes preprints, theses, and books), so check where each result was actually published. This is a massive step up from general web searches. It’s still a search engine, but it’s tailored for serious research. It’s a tool I use daily. It saves me a ton of time. And it helps me find those elusive primary sources.

Don’t forget about professional organizations. Many industries have associations that publish their own research. These can be excellent primary sources. They offer industry-specific insights. They often provide data that isn’t available anywhere else. It requires a bit more digging. But the unique insights you gain are invaluable. This is how you differentiate your content. It’s how you become an expert. It’s about going the extra mile. And it absolutely pays off in the long run.

The Contrarian Take: Why "More Sources" Isn’t Always Better (Quality Over Quantity)

Many content creators think more citations automatically mean better content. This is a common misconception. Stuff your content with weak citations and it becomes harder to read, not more credible. Imagine an article with dozens of footnotes, most of them linking to other blog posts or Wikipedia. That doesn’t build trust. It actually undermines it. It signals a lack of understanding of what a "source" truly means. Quality always trumps quantity when it comes to evidence. One strong primary source is worth ten weak secondary ones.

I once reviewed a piece with 30 citations, but 25 were blog posts. It looked impressive at first glance. But digging deeper revealed a shallow foundation. The author hadn’t done the hard work of finding original research. They just linked to whatever was easiest to find. This approach makes your content look authoritative but hollow. Readers, especially savvy ones, will see through it. They want real evidence, not just a long list of links. Focus on impact and relevance. Not just volume.

Instead of chasing a high citation count, focus on the *strength* of each source. Ask yourself: Is this the original study? Does it directly support my claim? Is it from a reputable, unbiased institution? If the answer is no, keep looking. It’s better to have five rock-solid citations than fifty flimsy ones. This approach ensures every piece of evidence truly strengthens your argument. It adds real weight to your content. It’s a more strategic way to build authority.
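The three questions above work as an all-or-nothing filter. Here is a minimal Python sketch of that idea, assuming a reviewer records a yes/no answer to each question by hand; `SourceCheck` and `keep_strong` are hypothetical names. The code doesn’t judge sources itself. It only enforces the rule that a single "no" disqualifies a candidate.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class SourceCheck:
    """A reviewer's yes/no answers to the three questions for one candidate source."""
    name: str
    is_original_study: bool        # Is this the original study, not a write-up of it?
    directly_supports_claim: bool  # Does it directly support this specific claim?
    reputable_institution: bool    # Is it from a reputable, unbiased institution?

    def passes(self) -> bool:
        # A single "no" means keep looking.
        return (
            self.is_original_study
            and self.directly_supports_claim
            and self.reputable_institution
        )


def keep_strong(candidates: List[SourceCheck]) -> List[str]:
    """Return only the candidates that pass all three checks."""
    return [c.name for c in candidates if c.passes()]


# Usage with hypothetical candidates for a single claim.
candidates = [
    SourceCheck("Original peer-reviewed study", True, True, True),
    SourceCheck("Blog post summarizing the study", False, True, False),
    SourceCheck("News article about the study", False, True, True),
]
print(keep_strong(candidates))  # ['Original peer-reviewed study']
```

Note that the news article fails even though it comes from a reputable outlet: it isn’t the original study, which is exactly the distinction this section is about.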

This also makes your content more readable. A dense block of citations can be distracting. It can break the flow of your narrative. Integrate your sources smoothly. Explain *why* a particular study is relevant. Don’t just drop a link. This shows you understand the material. It shows you’ve done your homework. It’s about demonstrating expertise. It’s not about playing a numbers game. This is how you truly win with content. It’s about being smart, not just busy.

Automating the *Right* Parts: AI’s Role in Content Trustworthiness (Not Source Finding)

AI isn’t useless for content trustworthiness. It just needs to be used for the right tasks. You miss AI’s true benefits if you force it into tasks it’s bad at. AI excels at processing and synthesizing large amounts of text. It can summarize complex papers. It can extract key arguments. It can even help identify gaps in your existing research. These are all valuable contributions. They free up your time for the critical human tasks. These tasks include source validation and deep analysis.

For example, use AI for summarizing complex papers, not for finding them. Feed it a long academic article. Ask it to pull out the main findings, the methodology, and the limitations. This gives you a quick overview. It helps you decide if the paper is worth a deeper read. This saves you hours. It ensures you’re focusing your human attention on the most relevant primary sources. This is smart automation. It’s using AI as a force multiplier.

Content Trustworthiness Audit (2026)

| Task | Input | Output | Verdict |
|---|---|---|---|
| AI draft generation | 1 hour of AI time | 3,000-word draft | High efficiency |
| Human source verification | 4 hours of human time | 10 verified sources | Critical investment |
| AI citation attempt | 10 minutes of AI time | 5 fake sources | Negative ROI |

Another powerful use for AI is to identify potential biases or missing perspectives in your content. Ask it to critique your draft. "What viewpoints might be missing here?" or "Are there any logical fallacies?" This helps you strengthen your arguments. It makes your content more robust. It’s not about finding sources. It’s about refining your narrative. This is where AI truly shines. It acts as a critical sounding board.

Ultimately, AI should be a tool for *enhancing* human intelligence, not replacing it. It helps you work smarter. It doesn’t do the thinking for you. For a complete AI guide, check out Postlabs’ comprehensive resource. The key is to understand its limitations. Then, play to its strengths. This balanced approach is the future of high-quality content creation. It ensures your content is both efficient and trustworthy. Anything less is a compromise you shouldn’t make.

What I Would Do in 7 Days to Boost Content Trustworthiness

If I had just one week to improve my content’s trustworthiness, here’s my exact plan:

- Day 1: Audit Existing Content. I would pick my top 5 most important articles. I would then manually verify every single source cited in them.

- Day 2: Define "Primary Source." I would create a clear internal definition for my team. This ensures everyone understands what counts as a primary source.

- Day 3: Research Tool Setup. I would bookmark Google Scholar and set up at least one academic database (e.g., PubMed, where a free My NCBI account lets you save searches). This provides better access to real research.

- Day 4: AI for Summaries. I would experiment with AI to summarize 3-5 complex primary sources. This helps me quickly grasp their core arguments.

- Day 5: Human Verification Protocol. I would establish a mandatory human review step for all new content. This step focuses solely on source validation.

- Day 6: Template for Citations. I would create a simple, consistent citation format. This makes it easier for my team to add and verify sources.

- Day 7: Training & Communication. I would share these new guidelines with my team. I would emphasize the "why" behind the changes.
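For the Day 6 template, one option is to make both the format and the required fields explicit, so a citation can’t slip into a draft half-filled. The sketch below is hypothetical Python, assuming your team stores citations as structured records; the field names, the `REQUIRED` tuple, and the "Authors (Year). Title. Publisher. Locator" style are illustrative choices, not an established citation standard.

```python
from dataclasses import dataclass
from typing import List, Optional

# Fields that must be filled in before a citation goes into a draft (assumed house rule).
REQUIRED = ("authors", "title", "publisher", "locator", "checked_by")


@dataclass
class Citation:
    authors: str
    year: int
    title: str
    publisher: str                    # journal, agency, or organization
    locator: Optional[str] = None     # DOI or stable URL a fact-checker can open
    checked_by: Optional[str] = None  # who opened and read the source

    def missing_fields(self) -> List[str]:
        """Names of required fields that are still empty."""
        return [name for name in REQUIRED if not getattr(self, name)]

    def render(self) -> str:
        """One consistent house style: Authors (Year). Title. Publisher. Locator"""
        text = f"{self.authors} ({self.year}). {self.title}. {self.publisher}."
        return f"{text} {self.locator}" if self.locator else text


# Usage with placeholder values (not a real reference).
c = Citation("Doe, J.", 2020, "Example title", "Example Journal")
print(c.missing_fields())  # ['locator', 'checked_by']
print(c.render())          # Doe, J. (2020). Example title. Example Journal.
```

Requiring `checked_by` ties the template to the Day 5 review step: a citation nobody has opened and read shows up as incomplete.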

Content Trustworthiness Checklist for 2026

- Verify all AI-generated citations manually before publishing.

- Prioritize primary sources from academic journals or government reports.

- Implement a dedicated human fact-checking step in your workflow.

- Train your team on proper source identification and citation practices.

- Use AI for content drafting and summarization, not for source discovery.

Frequently Asked Questions

Can AI tools find primary sources for my articles?

AI tools can help you *discover* potential sources by summarizing topics or suggesting keywords. However, they cannot reliably *find and cite* actual primary sources. They often hallucinate references, so human verification is always necessary.

What are the risks of using AI for source citation?

The main risks include publishing fabricated sources, spreading misinformation, and damaging your brand’s credibility. Readers lose trust in content that lacks verifiable, accurate information, and search engines and platforms that prioritize accuracy may rank it lower.

How can I use AI effectively to improve content trustworthiness?

Use AI for tasks it excels at: generating outlines, drafting content, and summarizing complex articles. Then, dedicate human resources to rigorously fact-check claims, validate all sources, and ensure accuracy. This hybrid approach maximizes both efficiency and credibility.