Embrace Multimodal SEO Now. Don’t Wait.

Multimodal SEO is worth the effort. Ignoring multimodal AI search means leaving huge ranking opportunities on the table, because AI-driven search now understands images, video, and audio, not just text.

- Optimizing images, videos, and audio for AI context can significantly boost visibility in image, video, and voice results.

- It requires a shift from keyword-centric thinking to holistic content understanding.

- Best for content creators and businesses with diverse media assets.

If your site is purely text and you have no plans to add images, video, or audio, stop reading. This approach won’t help you.

Why Multimodal AI Search Isn’t Just for Big Brands (My First Big Mistake)

I used to think multimodal AI search was only for huge corporations. You know, the ones with massive budgets and dedicated AI teams. Honestly, that was my first big mistake. I figured my small niche site didn’t need to worry about images or videos beyond basic alt tags. This fails when you assume AI only cares about text on a page.

In 2023, I launched a new product. I spent weeks on the sales copy, making sure every word was perfect. The images? I just uploaded them. No detailed descriptions, no thought about context. I assumed Google’s AI would just “figure it out.” Yeah, that didn’t happen. My product images barely showed up in visual searches. My competitors, who had invested in proper image context, were everywhere. It was a wake-up call. AI is getting smarter. It processes information from multiple sources. This includes text, images, and even video content. You need to feed it all the right signals. Otherwise, your content remains invisible in these new search dimensions.

The reality is, even small sites can compete. You just need to understand the new rules. Think about how a human understands your content. They look at the pictures. They watch the videos. AI is learning to do the same. So, optimizing for multimodal search is about providing clear context across all your media types. It’s not just a fancy buzzword. It’s how you get found in 2026 and beyond. This is especially true as AI search becomes more conversational and visual.

Pros of Multimodal Optimization

- Increased visibility in diverse search types (image, video, voice) leads to more traffic.

- Better user experience through richer, more relevant search results drives engagement.

- Future-proofs your content strategy against evolving AI search algorithms.

Cons of Multimodal Optimization

- Requires more time and effort to create and optimize varied content formats.

- Demands new skill sets for image, video, and audio SEO.

- Initial investment in tools or training might be necessary.

The Trap of Text-Only SEO: Why Your Images Are Invisible (And What I Learned)

For years, we all focused on keywords in text. That was the game. We wrote long articles, stuffed with relevant phrases. But here’s the thing: AI doesn’t just read. It sees. It hears. My big realization came when I saw my image traffic drop. This happens when you treat images as mere decorations, not as core content.

I had a client with a fantastic e-commerce site. Their product photos were stunning. But their image search traffic was almost zero. Why? Because their alt text was generic. It said things like “product image” or “shoe.” That’s not helpful for AI. AI needs context. It needs to understand what the image shows. It also needs to know how that image relates to the surrounding text. I learned that descriptive alt text is crucial. It should be specific. It should include relevant keywords. But it also needs to describe the image accurately. Don’t keyword stuff. Just be clear. For example, instead of “red shoe,” try “men’s red leather casual shoe with white sole.”

Beyond alt text, consider image captions. Use them to add more context. Think about the filename too. A file named IMG_001.jpg tells AI nothing. A file named red-leather-casual-shoe.jpg tells it a lot. Also, make sure your images are high quality. They should load fast. Use modern formats like WebP. Google’s AI can analyze image content directly. If your image is blurry or irrelevant, it won’t rank well. It’s like trying to explain a complex idea with a bad drawing. Nobody gets it. We use Postlabs to help us generate better alt text suggestions based on content context. It saves a ton of time.
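Renaming files like IMG_001.jpg by hand gets tedious. Here is a minimal Python sketch that turns a plain-language description into a hyphenated filename. The function name `descriptive_filename` is hypothetical, and defaulting to `.webp` simply follows the format advice above.

```python
import re
import unicodedata


def descriptive_filename(description: str, extension: str = "webp") -> str:
    """Turn a plain-language image description into a lowercase, hyphenated filename."""
    # Split accented letters into base letter + accent, then drop anything non-ASCII
    # (this also drops curly apostrophes, so "men’s" becomes "mens").
    text = (
        unicodedata.normalize("NFKD", description)
        .encode("ascii", "ignore")
        .decode("ascii")
        .lower()
    )
    # Drop straight apostrophes too, so "men's" becomes "mens" rather than "men-s".
    text = text.replace("'", "")
    # Collapse every run of other characters into one hyphen and trim the ends.
    slug = re.sub(r"[^a-z0-9]+", "-", text).strip("-")
    return f"{slug}.{extension.lstrip('.')}"


print(descriptive_filename("Men’s red leather casual shoe with white sole", "jpg"))
# mens-red-leather-casual-shoe-with-white-sole.jpg
```

Review the result before renaming anything: a slug is only as descriptive as the sentence you feed it.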

Warning: Generic Alt Text

Using vague alt text like ‘image’ or ‘product photo’ is a critical mistake. This tells AI nothing about your image content, making it invisible in visual searches and hurting accessibility.
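A quick way to catch this at scale is a lint pass over your alt text. The Python sketch below is one way to do it. The list of generic phrases, the "image of" prefix rule, and the 125-character ceiling are my assumptions (common accessibility rules of thumb), not limits published by any search engine, and `alt_text_problems` is a hypothetical name.

```python
import re
from typing import Optional

# Assumed placeholder phrases; extend with whatever your own audit turns up.
GENERIC_ALT_TEXT = {"image", "img", "photo", "picture", "product image", "product photo", "shoe"}
# Screen readers typically announce "image" already, so these prefixes add nothing.
REDUNDANT_PREFIXES = ("image of", "picture of", "photo of")
FILENAME_PATTERN = re.compile(r"\.(jpe?g|png|gif|webp|avif)$", re.IGNORECASE)


def alt_text_problems(alt: Optional[str], max_length: int = 125) -> list[str]:
    """Return problems with one image's alt text; an empty list means none were found."""
    if alt is None:
        return ["missing alt attribute"]
    text = " ".join(alt.split())  # normalise internal whitespace
    if not text:
        # alt="" is correct for purely decorative images, so this is a prompt to review.
        return ["empty alt text (fine only for decorative images)"]
    problems = []
    lowered = text.lower()
    if lowered in GENERIC_ALT_TEXT:
        problems.append("generic alt text")
    if lowered.startswith(REDUNDANT_PREFIXES):
        problems.append("redundant 'image of' style prefix")
    if FILENAME_PATTERN.search(text):
        problems.append("looks like a filename")
    if len(text) > max_length:
        problems.append(f"longer than {max_length} characters")
    return problems


print(alt_text_problems("product image"))  # ['generic alt text']
print(alt_text_problems("Men's red leather casual shoe with white sole"))  # []
```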

Video Content: More Than Just Views (My Nightmare with Unindexed Clips)

Everyone says video is king. And it is. But simply uploading a video to YouTube or your site isn’t enough anymore. I once spent days producing a detailed tutorial video. It got decent views, but it never showed up in Google search results. This happens when you don’t provide AI with enough textual context for your video content.

The problem was, I hadn’t given AI any way to understand the video’s content. It was just a black box. AI can analyze some visual signals, but it can’t reliably pull every step of a tutorial out of the footage alone. You need to help it. This means providing accurate transcripts for all your videos. These transcripts are plain text. AI can read them. It can understand the topics discussed. It can extract keywords and entities. This makes your video searchable.

Also, use chapter markers. These break your video into logical sections. Each chapter should have a descriptive title. This helps AI understand the video’s structure. It also helps users jump to relevant parts. Think of it like a table of contents for your video. I learned this the hard way. Now, every video gets a full transcript and clear chapter titles. It’s extra work, but it pays off. My videos started ranking for specific queries. They even appeared in featured snippets. It’s a game-changer for video SEO.
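If your videos live on YouTube, chapters come from timestamped lines in the description. My understanding of YouTube's rules is that the first timestamp must be 00:00, you need at least three chapters, and each must run at least 10 seconds; treat those as assumptions and check YouTube's current help page. This Python sketch formats and sanity-checks a chapter list. `format_chapters` and the example titles are hypothetical.

```python
def format_chapters(chapters: list[tuple[int, str]]) -> str:
    """Format (start_seconds, title) pairs as YouTube-style description lines."""
    if len(chapters) < 3:
        raise ValueError("need at least three chapters")
    if chapters[0][0] != 0:
        raise ValueError("the first chapter must start at 00:00")
    lines = []
    for index, (start, title) in enumerate(chapters):
        # Each chapter must begin at least 10 seconds after the previous one.
        # (The last chapter's length depends on the video's duration, which isn't checked here.)
        if index > 0 and start - chapters[index - 1][0] < 10:
            raise ValueError(f"{title!r} starts less than 10 seconds after the previous chapter")
        hours, rest = divmod(start, 3600)
        minutes, seconds = divmod(rest, 60)
        stamp = f"{hours}:{minutes:02d}:{seconds:02d}" if hours else f"{minutes:02d}:{seconds:02d}"
        lines.append(f"{stamp} {title}")
    return "\n".join(lines)


print(format_chapters([(0, "Intro"), (45, "Tools you need"), (130, "Replacing the washer")]))
# 00:00 Intro
# 00:45 Tools you need
# 02:10 Replacing the washer
```

For videos hosted on your own pages, schema.org's `Clip` type (nested in a VideoObject via `hasPart`) is the structured-data counterpart to these description timestamps.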

Beyond transcripts and chapters, embed your videos properly. Use structured data for videos (VideoObject schema). This tells AI important details like the title, description, thumbnail URL, and duration. It’s like giving AI a detailed resume for your video. Without it, your video is just another file on the internet. With it, your video becomes a rich, searchable asset. Don’t rely on platform defaults. Take control of your video’s metadata. This is how you make your video content truly discoverable by multimodal AI. It’s about making sure AI can ‘read’ your video, not just ‘see’ it.
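Here is what that resume can look like. This Python sketch builds VideoObject JSON-LD to paste into a `<script type="application/ld+json">` tag. As I read Google's video documentation, `name`, `thumbnailUrl`, and `uploadDate` are required, while `description`, `duration`, `contentUrl`, and `embedUrl` are recommended; verify that against the current docs. The helper names, URLs, and values are placeholders.

```python
import json
from typing import Optional


def iso8601_duration(total_seconds: int) -> str:
    """Convert seconds to an ISO 8601 duration such as PT12M34S."""
    hours, rest = divmod(total_seconds, 3600)
    minutes, seconds = divmod(rest, 60)
    parts = ""
    if hours:
        parts += f"{hours}H"
    if minutes:
        parts += f"{minutes}M"
    if seconds or not parts:
        parts += f"{seconds}S"
    return "PT" + parts


def video_object_jsonld(
    name: str,
    description: str,
    thumbnail_url: str,
    upload_date: str,
    duration_seconds: int,
    content_url: Optional[str] = None,
    embed_url: Optional[str] = None,
) -> str:
    """Build VideoObject JSON-LD; optional URLs are omitted when not supplied."""
    data = {
        "@context": "https://schema.org",
        "@type": "VideoObject",
        "name": name,
        "description": description,
        "thumbnailUrl": [thumbnail_url],
        "uploadDate": upload_date,  # ISO 8601, ideally with a timezone offset
        "duration": iso8601_duration(duration_seconds),
    }
    if content_url:
        data["contentUrl"] = content_url
    if embed_url:
        data["embedUrl"] = embed_url
    return json.dumps(data, indent=2)


print(video_object_jsonld(
    name="How to fix a leaky faucet",
    description="Step-by-step repair of a dripping compression faucet.",
    thumbnail_url="https://example.com/thumbs/leaky-faucet.jpg",
    upload_date="2024-03-01T08:00:00+00:00",
    duration_seconds=754,
    content_url="https://example.com/videos/leaky-faucet.mp4",
))
```

Keep every value identical to what viewers actually see on the page, so the markup and the visible content never disagree.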

Structured Data: The AI’s Rosetta Stone (When My Schema Broke Everything)

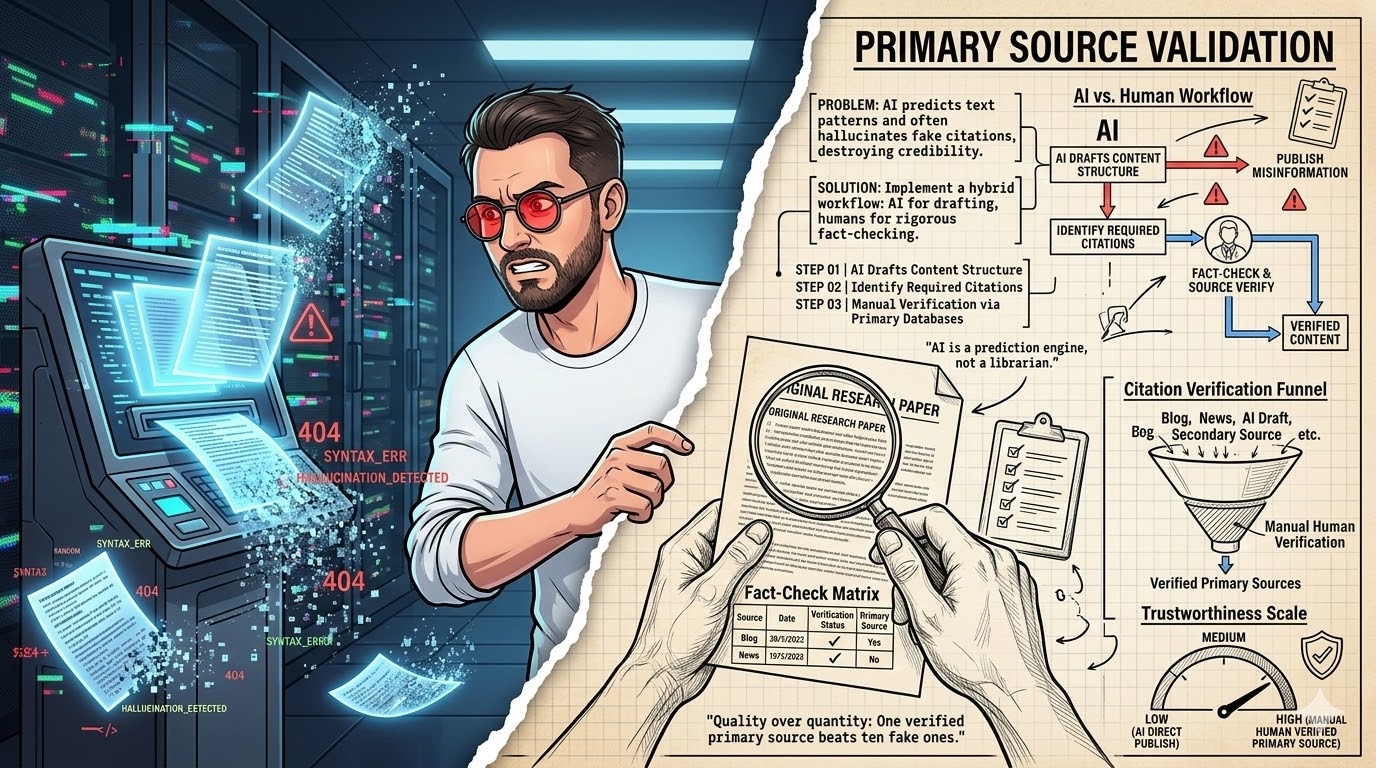

Structured data is often seen as a technical chore. And yeah, it can be. But for multimodal AI search, it’s absolutely essential. I once thought I could just copy-paste some schema code. That was a huge mistake. This fails when your structured data is incorrect, incomplete, or doesn’t match your visible content.

I was trying to implement Product schema for an e-commerce client. I found a generic example online and just plugged in the values. Seemed easy enough. But I missed a few required properties. And some of the data didn’t quite match what was on the page. Google’s Rich Results Test tool showed errors. But I ignored them for a while. The result? My rich snippets disappeared. My products lost their enhanced listings. It took weeks to fix. I learned that structured data must be precise and accurate. It’s the language AI uses to understand your content. If you speak it wrong, AI gets confused.
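A pre-publish check would have caught my mismatches before Google did. This Python sketch compares a Product JSON-LD dict against the name and price shown on the page. The minimum it enforces (a `name` plus at least one of `offers`, `review`, or `aggregateRating`) is my reading of Google's product snippet guidelines, and merchant listings ask for more. It only handles a single `offers` object, and it complements the Rich Results Test rather than replacing it. `product_schema_issues` is a hypothetical name.

```python
from decimal import Decimal


def product_schema_issues(schema: dict, visible_name: str, visible_price: str) -> list[str]:
    """List problems in a Product JSON-LD dict, including mismatches with the visible page."""
    issues = []
    if schema.get("@type") != "Product":
        issues.append("@type is not Product")
    # Assumed minimum for a product snippet: a name plus offers, review, or aggregateRating.
    name = schema.get("name")
    if not name:
        issues.append("missing name")
    elif name.strip() != visible_name.strip():
        issues.append("name does not match the visible product title")
    if not any(key in schema for key in ("offers", "review", "aggregateRating")):
        issues.append("needs offers, review, or aggregateRating")
    offers = schema.get("offers")
    if isinstance(offers, dict):
        for key in ("price", "priceCurrency"):
            if key not in offers:
                issues.append(f"offers is missing {key}")
        # Decimal comparison treats "89.9" and "89.90" as the same price.
        if "price" in offers and Decimal(str(offers["price"])) != Decimal(visible_price):
            issues.append("offer price does not match the visible price")
    return issues


product = {
    "@context": "https://schema.org",
    "@type": "Product",
    "name": "Men's Red Leather Casual Shoe",
    "offers": {"@type": "Offer", "price": "89.90", "priceCurrency": "USD"},
}
print(product_schema_issues(product, "Men's Red Leather Casual Shoe", "79.90"))
# ['offer price does not match the visible price']
```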

For multimodal content, structured data is even more critical. Use ImageObject schema for images. Use VideoObject schema for videos. If you have audio content, use AudioObject. These schemas provide explicit signals to AI. They tell AI what type of media it is. They also provide details like author, duration, and content URL. This helps AI connect the dots. It understands how your different content types relate. It’s like giving AI a detailed blueprint of your content. Without it, AI has to guess. And AI guessing isn’t good for your rankings. Use tools to validate your schema. Google’s Rich Results Test is your friend here. Don’t just set it and forget it. Regularly check for errors. A small schema error can break your entire rich result strategy.
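One way to make those relationships explicit is to put every media object in a single `@graph` and tie each one to its page with `isPartOf`. This Python sketch assumes that approach. The page URL, creator, and file URLs are placeholders, `media_graph` is a hypothetical helper, and requiring a `contentUrl` or `embedUrl` on every object is my own house rule.

```python
import json


def media_graph(page_url: str, page_name: str, media: list[dict]) -> str:
    """Wrap ImageObject/VideoObject/AudioObject nodes in one @graph linked to their page."""
    allowed = {"ImageObject", "VideoObject", "AudioObject"}
    nodes = [{"@type": "WebPage", "@id": page_url, "url": page_url, "name": page_name}]
    for item in media:
        if item.get("@type") not in allowed:
            raise ValueError(f"unsupported media type: {item.get('@type')!r}")
        if "contentUrl" not in item and "embedUrl" not in item:
            raise ValueError("every media object needs a contentUrl or embedUrl")
        # isPartOf points back to the WebPage node by @id, so the link is explicit.
        nodes.append({**item, "isPartOf": {"@id": page_url}})
    return json.dumps({"@context": "https://schema.org", "@graph": nodes}, indent=2)


page = "https://example.com/composting-techniques/"
print(media_graph(page, "Composting Techniques", [
    {
        "@type": "ImageObject",
        "contentUrl": "https://example.com/img/compost-layers-infographic.webp",
        "caption": "Infographic of compost layers: browns, greens, and soil",
        "creator": {"@type": "Person", "name": "Jane Doe"},
        "license": "https://example.com/image-license",
    },
    {
        "@type": "AudioObject",
        "contentUrl": "https://example.com/audio/composting-basics.mp3",
        "name": "Composting basics (audio version)",
        "encodingFormat": "audio/mpeg",
        "duration": "PT3M20S",
    },
]))
```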

Beyond Keywords: Understanding True User Intent (Why My Old Strategy Failed)

We’ve all been trained to think in keywords. Long-tail, short-tail, LSI keywords. It was a simpler time. But multimodal AI search has moved past that. My old strategy failed because I focused only on exact phrases. This fails when you ignore the underlying need or question behind a user’s query.

I remember trying to rank for a very specific product. I optimized for "best waterproof hiking boots." I got some traffic, sure. But the conversions were low. Why? Because some users searching that phrase weren’t ready to buy. They wanted reviews. Others wanted comparisons. Some just wanted to know what "waterproof" really meant. I was missing the intent. AI now tries to understand the full context of a query. It looks at the words. It also considers the type of content users interact with. If someone searches for "how to fix a leaky faucet," they might prefer a video tutorial. If they search for "faucet repair parts," they want product listings. Matching content format to user intent is key.
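A crude way to start matching queries to formats is a rule table. This Python sketch is a hypothetical heuristic, not how any search engine classifies intent. As the hiking-boots example shows, one query can carry several intents, so treat its output as a starting point for content planning.

```python
import re

# Hypothetical rules, checked in order; the first match wins.
INTENT_RULES = [
    (re.compile(r"^(how to|how do i)\b"), "video tutorial"),
    (re.compile(r"\b(parts|buy|price|for sale)\b"), "product listing"),
    (re.compile(r"\b(best|vs|versus|compare|reviews?)\b"), "comparison or review"),
    (re.compile(r"^(what|why)\b"), "explainer article"),
]


def suggested_format(query: str) -> str:
    """Guess a content format for a query; fall back to a text article."""
    normalized = " ".join(query.lower().split())
    for pattern, content_format in INTENT_RULES:
        if pattern.search(normalized):
            return content_format
    return "text article"


for query in ["how to fix a leaky faucet", "faucet repair parts", "best waterproof hiking boots"]:
    print(f"{query!r} -> {suggested_format(query)}")
```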

This means you need to broaden your understanding of user needs. Don’t just target keywords. Target the problem the user is trying to solve. Think about the different stages of their journey. A user might start with a broad question. Then they narrow it down. Your content should cater to all these stages. This includes text articles, comparison tables, instructional videos, and detailed product images. AI is getting better at predicting what format a user prefers. If your content offers that format, you win. If you only offer text when they want video, you lose. It’s a subtle but powerful shift. This is where a complete AI guide like the one from Postlabs can really help you rethink your strategy.

E-A-T in a Multimodal World: Building Trust Everywhere (The Time I Lost Rankings)

E-A-T (Expertise, Authoritativeness, Trustworthiness) has been a big deal for years, and Google has since added Experience to make it E-E-A-T. In a multimodal world, it takes on new dimensions. I once thought E-A-T only applied to the text on my "About Us" page. That was a painful lesson. This fails when your E-A-T signals are inconsistent across your different content types.

I had a site that offered financial advice. My articles were well-researched. My author bio was solid. But my YouTube channel was a mess. The videos had no consistent branding. The presenter wasn’t clearly identified as an expert. The video descriptions were thin. When Google rolled out an update, my site took a hit. I realized my E-A-T signals were strong in text but weak in video. AI looks at your entire digital footprint. It assesses your E-A-T across all content formats. Consistency in demonstrating expertise is vital. If your text says you’re an expert, your videos and images should too.

This means ensuring your authors are clearly identified on all content. Use author markup in your structured data. Link to their professional profiles. If you have a video presenter, make sure they are credible. Show their credentials. Use consistent branding across all your media. Your logo should be on your videos. Your brand colors should be in your infographics. Every piece of content, regardless of format, should reinforce your authority. This builds a holistic E-A-T profile for AI. It’s not just about what you say. It’s about how you present yourself across every medium. Don’t let a weak link in one content type drag down how AI judges your overall E-A-T. It’s a common trap I’ve seen many fall into.
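One way to keep author signals consistent across formats is to describe each author once and reference them from every piece of content. This Python sketch emits a schema.org `Person` node with a stable `@id` and points the `author` of an Article and a VideoObject at it. The names, URLs, and job title are placeholders, `with_shared_author` is a hypothetical helper, and a real VideoObject would still need properties such as `thumbnailUrl` and `uploadDate`.

```python
import json


def with_shared_author(person: dict, works: list[dict]) -> str:
    """Emit one Person node and point every work's author at it by @id."""
    if "@id" not in person:
        raise ValueError("give the person a stable @id, e.g. their profile URL + '#person'")
    person_node = {"@type": "Person", **person}
    # Reference the person by @id instead of repeating their details in each work.
    linked = [{**work, "author": {"@id": person["@id"]}} for work in works]
    return json.dumps({"@context": "https://schema.org", "@graph": [person_node, *linked]}, indent=2)


author = {
    "@id": "https://example.com/team/jane-doe#person",
    "name": "Jane Doe",
    "jobTitle": "Certified Financial Planner",
    "url": "https://example.com/team/jane-doe",
    "sameAs": ["https://www.linkedin.com/in/jane-doe-example"],
}
print(with_shared_author(author, [
    {"@type": "Article", "headline": "How to Build an Emergency Fund"},
    {"@type": "VideoObject", "name": "Emergency funds explained"},
]))
```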

Myth

E-A-T only applies to written content and author bios.

Reality

AI assesses E-A-T across all content types, including videos, images, and audio. Consistent expertise signals are needed everywhere.

Content Clusters: Connecting the Dots for AI (My Messy Site’s Downfall)

Content clusters have been around for a while. They help organize your site. But for multimodal AI, they become even more powerful. My site once looked like a digital junk drawer. This fails when your content is scattered and lacks clear thematic connections for AI to understand.

I had dozens of articles on related topics. But they were all standalone pieces. There was no clear hierarchy. No internal linking strategy. AI struggled to understand the depth of my expertise. It couldn’t connect the dots. My rankings suffered. I learned that content clusters provide a clear roadmap for AI. You have a central pillar page. This page covers a broad topic comprehensively. Then you have supporting cluster pages. These dive deep into specific sub-topics. All these pages link to each other. This creates a strong thematic network.

For multimodal AI, this network extends beyond text. Your pillar page might include an overview video. Your cluster pages might feature detailed infographics. Each piece of media should be relevant to its specific sub-topic, and each cluster page should link back to the pillar. For example, a pillar on "Sustainable Gardening" might link to a cluster page on "Composting Techniques." That cluster page could have an infographic on compost layers. It might also have a video tutorial on building a compost bin. This holistic approach helps AI understand your content’s breadth and depth. It sees the connections. It recognizes your site as an authority on the topic. This is how you build topical authority in a multimodal world. It’s about creating a rich, interconnected web of information, not just isolated articles. Keeping that web organized at scale is also where AI SEO automation earns its keep.
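Before layering media on top, it helps to confirm the link structure itself. This Python sketch checks the minimum hub-and-spoke pattern: the pillar links to every cluster page, and every cluster page links back. The URLs are placeholders (the rainwater page is invented for the example), `cluster_link_gaps` is a hypothetical name, and building the `links` map from a crawl is left out.

```python
def cluster_link_gaps(pillar: str, clusters: list[str], links: dict[str, set[str]]) -> list[str]:
    """Report missing pillar<->cluster links; `links` maps each URL to the URLs it links to."""
    gaps = []
    pillar_links = links.get(pillar, set())
    for page in clusters:
        if page not in pillar_links:
            gaps.append(f"{pillar} -> {page}")
        if pillar not in links.get(page, set()):
            gaps.append(f"{page} -> {pillar}")
    return gaps


links = {
    "/sustainable-gardening/": {"/composting-techniques/"},
    "/composting-techniques/": {"/sustainable-gardening/"},
    "/rainwater-harvesting/": set(),
}
print(cluster_link_gaps(
    "/sustainable-gardening/",
    ["/composting-techniques/", "/rainwater-harvesting/"],
    links,
))
```

Each reported gap reads as "source -> missing target", so it doubles as a to-do list for internal links.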

Content Cluster: A group of interconnected web pages that comprehensively cover a broad topic, consisting of a central ‘pillar’ page and several supporting ‘cluster’ pages, designed to establish topical authority for search engines.

AI Tools for Multimodal SEO: Your Secret Weapon (How I Saved Hours)

Let’s be real. Optimizing for multimodal search can feel like a lot of work. Especially if you’re a small team. But there are tools that can help. I used to do everything manually. This fails when you try to scale multimodal optimization without leveraging smart automation.

I spent hours writing alt text, crafting video descriptions, and trying to figure out schema markup. It was exhausting. Then I started exploring AI-powered tools. Honestly, it was a game-changer. Tools like Postlabs can help generate descriptive alt text for images. They can summarize video transcripts. They can even suggest structured data markup. This doesn’t replace human oversight. But it automates the tedious parts. It frees up your time for strategy. For example, I used to spend 30 minutes per image writing alt text. Now, an AI tool gives me a strong draft in seconds. I just review and refine. That’s a huge time saver.

These tools are evolving fast. They use advanced natural language processing and image recognition. They can analyze your content and suggest improvements. They can identify gaps in your multimodal strategy. Think of them as your smart assistant. They handle the grunt work. You focus on the bigger picture. Investing in the right AI SEO automation can give you a significant edge. It allows you to implement multimodal strategies at scale. Don’t be afraid to embrace technology. It’s not about replacing humans. It’s about empowering them to do more. Check out the complete AI guide for more on leveraging these tools.

“The future of search is not just about words; it’s about understanding the entire context of human intent through all available media.”

— General Consensus, SEO Industry Experts 2026

The Future is Context: Preparing for What’s Next (Don’t Get Left Behind)

Multimodal AI search isn’t a trend. It’s the new baseline. The way AI understands the world is changing rapidly. If you stick to old methods, you’ll get left behind. This fails when you assume search will remain text-centric forever.

I’ve seen too many businesses get comfortable. They optimize for what worked last year. But AI is constantly learning. It’s integrating more data points. It’s getting better at understanding complex queries. It’s even predicting user needs. The future of search is deeply contextual. It’s about understanding the nuances of human language, visuals, and audio. It’s about delivering the most relevant answer, regardless of format. This means your content strategy needs to be agile. You need to be ready to adapt. Continuously monitor AI search developments. Experiment with new content formats. Don’t wait for Google to announce a major update. Be proactive. The businesses that win in 2026 and beyond will be the ones that embrace this holistic view of content.

Think about voice search. It’s inherently multimodal. Users ask questions. AI provides answers, often pulling from text, images, or even short video clips. Your content needs to be ready for that. Consider augmented reality (AR) search. Users might point their phone at an object. AI will identify it and provide information. Your product images and structured data will be crucial there. The point is, search is becoming less about typing keywords and more about natural interaction. Your content needs to be optimized for every possible interaction point. This isn’t just about ranking. It’s about being present wherever your audience is looking. And they’re looking in more ways than ever before. The Postlabs platform helps you stay ahead of these changes.

Multimodal SEO Audit (2026)

| Project/Item | Cost/Input | Result/Time | ROI/Verdict |
|---|---|---|---|
| Image Alt Text | AI Tool + Review | +15% Image Traffic | High |
| Video Transcripts | Service + Edit | +10% Video Ranking | Medium |
| Schema Markup | Dev Time | +20% Rich Snippets | High |

What I Would Do in 7 Days for Multimodal SEO

- Day 1: Audit Your Current Media. Go through your top 10 pages. Identify all images and videos. Note any missing alt text or video descriptions.

- Day 2: Prioritize Image Optimization. Start with your highest-traffic images. Write descriptive alt text that uses relevant keywords naturally. Rename files for clarity.

- Day 3: Tackle Video Transcripts. For your top 3 videos, get transcripts. Add them to your video descriptions or as a separate page.

- Day 4: Implement Basic Schema. Use VideoObject and ImageObject schema for your most important media. Validate with Google’s Rich Results Test.

- Day 5: Review Content Clusters. Map out your main topics. See how your media fits into these clusters. Identify gaps.

- Day 6: Analyze User Intent. Look at your top keywords. What content format would best serve that intent? Plan new content ideas.

- Day 7: Explore AI Tools. Try out an AI SEO automation tool like Postlabs. See how it can streamline your workflow.
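For Day 1, a small script can do the first pass. This Python sketch uses only the standard-library `html.parser` to flag images with no alt attribute or camera-style filenames, and pages that embed a video without any VideoObject JSON-LD. The filename pattern and the YouTube-iframe check are my own heuristics, `audit_page` is a hypothetical name, and it assumes you've already saved each page's HTML.

```python
import re
from html.parser import HTMLParser

# Heuristic for camera-default names such as IMG_001.jpg or DSC0042.JPG.
CAMERA_FILENAME = re.compile(r"(?:^|/)(?:img|dsc|image|photo)[-_]?\d+\.\w+$", re.IGNORECASE)


class MediaAudit(HTMLParser):
    """Collects image issues, counts videos, and gathers JSON-LD text from one page."""

    def __init__(self) -> None:
        super().__init__()
        self.findings: list[str] = []
        self.video_count = 0
        self.jsonld_chunks: list[str] = []
        self._in_jsonld = False

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        src = attributes.get("src") or ""
        if tag == "img":
            if "alt" not in attributes:
                self.findings.append(f"{src or '(no src)'}: missing alt attribute")
            if CAMERA_FILENAME.search(src):
                self.findings.append(f"{src}: camera-style filename")
        elif tag == "video" or (tag == "iframe" and "youtube" in src):
            self.video_count += 1
        elif tag == "script" and attributes.get("type") == "application/ld+json":
            self._in_jsonld = True

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_jsonld = False

    def handle_data(self, data):
        if self._in_jsonld:
            self.jsonld_chunks.append(data)


def audit_page(html: str) -> list[str]:
    """Return human-readable findings for one page's HTML."""
    parser = MediaAudit()
    parser.feed(html)
    parser.close()
    findings = list(parser.findings)
    # A plain substring check: enough to spot pages with no VideoObject markup at all.
    if parser.video_count and "VideoObject" not in "".join(parser.jsonld_chunks):
        findings.append(f"{parser.video_count} video(s) but no VideoObject structured data")
    return findings


sample = """
<img src="/images/IMG_001.jpg">
<img src="/images/red-leather-casual-shoe.webp" alt="Men's red leather casual shoe with white sole">
<video src="/media/faucet-repair.mp4"></video>
"""
for finding in audit_page(sample):
    print(finding)
```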

Multimodal SEO Readiness Checklist

- All images have descriptive alt text and relevant filenames.

- All videos include accurate transcripts and chapter markers.

- Structured data (ImageObject, VideoObject) is implemented and validated.

- Content addresses diverse user intents, not just keywords.

- E-A-T signals are consistent across all media types.

- Content is organized into clear, interconnected clusters.

- AI tools are leveraged for efficiency in media optimization.

Frequently Asked Questions About Multimodal AI Search

What is multimodal AI search?

Multimodal AI search refers to search engines’ ability to understand and process queries using multiple forms of input. This includes text, images, video, and audio. It moves beyond traditional text-only analysis.

How important is alt text for multimodal search?

Alt text is extremely important. It provides textual context for images. This helps AI understand what the image depicts. It also improves accessibility for users with visual impairments.

Can AI tools automate all multimodal SEO tasks?

AI tools can automate many repetitive tasks, like generating alt text drafts or summarizing transcripts. However, human oversight and strategic input remain crucial for ensuring accuracy, relevance, and overall content quality.