Master Advanced AI Prompting Now

Mastering advanced prompting is absolutely worth it. Generic AI prompts are a fast track to garbage content that wastes time and resources.

- System prompts define AI persona and constraints, dramatically boosting output quality.

- Meta-prompting and self-reflection cut down on manual prompt iteration and content revisions.

- Ignoring advanced techniques leads to bland, often inaccurate, long-form blog posts.

If you are still using basic, one-shot prompts for important content, stop and read this first.

Ready to see how much you really know about getting solid output from AI? Take this quick knowledge check.

What percentage of AI output quality do professionals attribute to well-crafted system prompts?

Professionals attribute 80% of AI output quality to well-crafted system prompts. These set the persona, capabilities, and constraints for the AI. It’s a huge difference.

Why Your Basic Prompts Are Bullshit: The Cost of Generic AI Output

Look, I’ve been there. You type a simple instruction, hit enter, and expect AI magic. Instead, you get a blog post that feels like it was written by a robot wearing a beige suit. It’s generic, it’s boring, and honestly, it’s a huge waste of time. The trap is thinking the AI knows what you want just from a couple of keywords. That’s a rookie mistake. Your blog posts turn into SEO garbage when you just mash keywords into a basic prompt, because the AI lacks the deep context needed for quality content.

The real cost here isn’t just wasted minutes. It’s the missed opportunity to publish something genuinely valuable. You spend hours editing or completely rewriting content that should have been 80% good from the start. This is why understanding advanced prompting techniques is not optional anymore. It’s how you move from generic filler to genuinely engaging long-form content.

Prompt Engineering: The discipline of crafting inputs to AI models to achieve desired outputs, moving beyond basic queries to structured, contextual, and iterative instructions for better results.

When you treat AI like a glorified search engine, you get search engine results—surface-level text that adds no real value. We’re talking about publishing stuff that actively harms your brand because it’s so forgettable. I’ve personally wasted days on this crap, thinking I could just “fix it in post.” Not fun. You need to give the AI proper guidance.

System Prompts: When Your Blog Post Goes Off the Rails

One of the biggest frustrations I’ve seen is when a seemingly good prompt starts strong but then the AI goes totally off the rails halfway through. It’s like the AI just forgets its purpose. This happened to me on a 3,000-word piece last year. I thought my initial prompt was solid. Turns out, I didn’t lock down the “why” and “how” enough. AI spits out useless drivel when you don’t define its role and rules up front, because it lacks a consistent frame of reference.

A system prompt defines the AI’s persona, its capabilities, and its core constraints even before you give it the specific task [1]. Think of it as the AI’s operating manual for the entire conversation. It’s the difference between asking a random stranger for directions and asking a seasoned local tour guide. You need to be explicit about everything. This setup ensures consistent tone and structure, especially for longer pieces. Without it, your AI is just guessing.

Myth

A good initial prompt covers everything for long-form content.

Reality

A single prompt can’t sustain quality across thousands of words. You need system prompts and chaining to maintain coherence and depth, avoiding mid-article drift.

When I’m writing a detailed blog post, I tell the AI it’s a “professional blogger specializing in [topic],” specify the word count, and even the SEO headers. This defines 80% of the output quality, straight up [1]. This isn’t just about output; it’s about making your content generation process repeatable. Without a clear system prompt, you’re constantly fighting against the AI’s default blandness. It’s a battle you’ll lose every time.
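To make that setup repeatable, here is a minimal Python sketch that assembles a system prompt from the pieces mentioned above: a persona, a word count, and the SEO headers. The function name, the example topic, and the constraint wording are all illustrative assumptions, and the `role`/`content` message layout is only the common chat convention, so check your provider's documentation before relying on it.

```python
def build_system_prompt(topic: str, word_count: int, headers: list[str]) -> str:
    """Assemble a system prompt from a persona, a length target, and SEO headers."""
    header_lines = "\n".join(f"- {h}" for h in headers)
    return (
        f"You are a professional blogger specializing in {topic}.\n"
        f"Write roughly {word_count} words.\n"
        "Use exactly these section headers, in this order:\n"
        f"{header_lines}\n"
        # Constraint wording below is an assumption; swap in your own rules.
        "Constraints: keep a consistent tone throughout and do not invent statistics."
    )


# Hypothetical example values; the system message comes before the task itself.
messages = [
    {
        "role": "system",
        "content": build_system_prompt(
            "email marketing", 3000, ["Why Open Rates Drop", "Fixing Subject Lines"]
        ),
    },
    {"role": "user", "content": "Draft the post."},
]
```

Keeping the persona and constraints in one function means every post in a series starts from the same frame of reference.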

Meta-Prompting: That Time AI Wrote Better Prompts Than Me

I gotta tell you, my mind was blown when I first tried meta-prompting. We were stuck on a particularly tricky blog post about AI ethics. I’d iterated on prompts for hours, trying to get the right angle and depth. It was a damn mess. Then, I used a meta-prompting workflow. This is where the AI itself generates, evaluates, and then synthesizes its own prompts [1]. It felt like cheating, but it reduced manual iteration significantly. Your content workflow grinds to a halt if you manually optimize every prompt from scratch, because you are bottlenecking the entire creative process with human guesswork.

This technique is like having an expert prompt engineer on tap, constantly refining your instructions. We asked the AI to “generate 5 prompts for writing a 3000-word blog on AI ethics, using few-shot, chain-of-thought, and persona techniques” [1]. Then, we told it to evaluate those prompts for clarity and robustness. The resulting synthesized prompt was genuinely better than anything I’d crafted by hand. It helped us crank out a high-quality draft in about half the usual time.
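The generate, evaluate, synthesize loop can be sketched in a few lines of Python. This is an assumption-heavy outline rather than the exact workflow described above: `ask` stands in for whatever function sends a prompt to your model and returns text, and `fake_model` is a hypothetical stub so the sketch runs offline.

```python
from typing import Callable


def meta_prompt(task: str, ask: Callable[[str], str], n: int = 5) -> str:
    """Generate candidate prompts, have the model evaluate them, then synthesize one."""
    candidates = ask(
        f"Generate {n} prompts for this task: {task}. "
        "Use few-shot, chain-of-thought, and persona techniques. Number them."
    )
    evaluation = ask(
        "Evaluate each prompt below for clarity and robustness. "
        f"Note strengths and weaknesses.\n\n{candidates}"
    )
    return ask(
        "Synthesize one improved prompt from the candidates and the evaluation.\n\n"
        f"Candidates:\n{candidates}\n\nEvaluation:\n{evaluation}"
    )


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for a real model call."""
    return f"<reply to: {prompt[:40]}>"


final_prompt = meta_prompt("writing a 3000-word blog on AI ethics", fake_model)
```

Swap `fake_model` for a real client call and the three steps stay the same; the synthesized prompt is what you would save as a reusable template.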

This isn’t just a party trick. Meta-prompting creates reusable templates that encode specific domain expertise. This means your team can achieve consistent quality, even if they aren’t prompting gurus. It makes scaling content a hell of a lot easier. If you are struggling to get consistent output, this is your secret weapon.

Here’s an illustrative model based on my team’s observed workflow efficiency. It highlights the significant boost in prompt performance when advanced techniques are applied, particularly meta-prompting. This isn’t a universal benchmark, but an estimation based on our experience.

Figure: Prompt Performance Improvement with Advanced Techniques (estimated output quality vs. iterations for long-form content)

Pros of Advanced Prompting

- Consistent Quality: AI output aligns perfectly with brand voice and factual accuracy requirements.

- Reduced Editing Time: Spend less time fixing errors and more on strategic content updates.

- Scalable Content Production: Generate high volumes of long-form content without sacrificing depth or relevance.

Cons of Advanced Prompting

- Initial Learning Curve: Requires time to master specific techniques and best practices.

- Increased Prompt Complexity: Prompts can become lengthy and intricate, demanding careful construction.

- Over-reliance Risk: Can lead to complacency if human oversight on factual review diminishes.

Chain-of-Thought: How I Almost Shipped a Completely Incoherent Article

I once tried to get an AI to write a detailed, step-by-step guide on a complex software implementation. I just gave it the topic and expected a perfect output. Big mistake. The draft came back as a jumbled mess, skipping critical steps and repeating others. It was a headache to read, let alone fix. I almost shipped a completely incoherent article, which would have been a hell of a reputation hit. AI generates confusing, unstructured content when you skip the step-by-step breakdown, because it attempts to tackle the entire problem at once.

This is where chain-of-thought prompting comes in. Instead of one undifferentiated request, you spell the task out as smaller, logical steps. For example, “Outline a 2500-word blog on prompting trends step-by-step: 1) Research key stats, 2) Structure sections, 3) Write engaging copy, 4) Add examples” [1]. Each step builds on the previous one. This pushes the AI to work through the problem in order, much as a human writer would, which tends to improve accuracy and coherence on complex topics.
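If you write these step-by-step prompts often, a small helper keeps the numbering consistent. The sketch below is one possible way to do it; the function name and the "work through these steps" wording are assumptions, while the goal and the four steps come from the example above.

```python
def step_by_step_prompt(goal: str, steps: list[str]) -> str:
    """Spell out the task as numbered steps the model should work through in order."""
    numbered = "\n".join(f"{i}) {step}" for i, step in enumerate(steps, start=1))
    return (
        f"{goal}\n"
        "Work through these steps in order, finishing each before starting the next:\n"
        f"{numbered}"
    )


prompt = step_by_step_prompt(
    "Outline a 2500-word blog on prompting trends step-by-step.",
    ["Research key stats", "Structure sections", "Write engaging copy", "Add examples"],
)
print(prompt)
```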

Warning: Over-Simplification Trap

Do not ask for a complex output in a single step. AI can skip logical connections and produce fragmented, irrelevant content, wasting your time and demanding full rewrites.

The benefit here is massive for long-form content. It ensures the AI follows a logical flow, preventing those awkward jumps or repetitive sections that plague single-shot prompts. If you’re building out a detailed guide or an in-depth analysis, this technique is non-negotiable. It makes the AI’s reasoning transparent and the output predictable. This process is key to getting solid long-form content out of an AI content generator.

Self-Reflection: Don’t Let the AI Pull a Fast One on Your Facts

Honestly, AI can be a damn convincing liar sometimes. Not intentionally, mind you, but it sure can generate confident-sounding nonsense. I had a piece about specific compliance regulations last year. The AI wrote a brilliant, articulate section—that was 100% wrong on a key detail. I caught it before publishing, but it was a close call. Don’t let the AI pull a fast one on your facts, because you risk your credibility if you publish unverified content.

This is why self-reflection prompting is a game-changer. You explicitly instruct the AI to critique its own output. I often add something like, “Before answering, identify three potential flaws in your reasoning and address them,” or “Critique your draft for logical gaps, factual errors, and engagement; revise before final output” [1]. This makes the AI pause and review its work. It’s like having an internal editor built right into your prompting process.

The Brutal Truth

It’s amazing how much this improves the nuance and accuracy of long-form responses. The AI works through a critical review pass before it hands anything over. This technique can cut down the post-generation editing you’ll need to do, though you should still fact-check anything that matters, because the AI can critique a draft without actually verifying it. It also helps with the flow and engagement of the text, as the AI tries to find ways to make its arguments stronger. This saves a ton of headaches down the line, trust me.
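A self-reflection step is easy to bolt onto any prompt. Here is a minimal Python sketch, assuming you pass in your own task prompt and a list of things to check; the function name, the example task, and the instruction to flag unverified claims are illustrative additions, and the three checks are the ones quoted earlier.

```python
def with_self_reflection(task_prompt: str, checks: list[str]) -> str:
    """Append a critique-then-revise instruction to an existing prompt."""
    check_list = ", ".join(checks)
    return (
        f"{task_prompt}\n\n"
        f"After drafting, critique your draft for {check_list}. "
        "List each problem you find, revise the draft to address it, "
        "and flag any claim you could not verify so a human can check it."
    )


# Hypothetical task; swap in your own topic and critical points.
prompt = with_self_reflection(
    "Write a 1500-word overview of data-retention rules for small businesses.",
    ["logical gaps", "factual errors", "engagement"],
)
```

Asking the model to flag what it could not verify gives you a short list to check by hand instead of rereading the whole draft.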

Context Design: Why Static Prompts Are a Dead-End Trap

Early on, I’d create one “master prompt” for a content series and then just reuse it. I thought I was being smart, saving time. But the content felt stale after a few posts. The AI wasn’t adapting, wasn’t evolving with new information or subtle shifts in audience needs. It was a dead-end trap, making my content rigid. Your AI output remains rigid and unadaptable if you stick to single, unevolving prompts, preventing it from producing truly dynamic and relevant content.

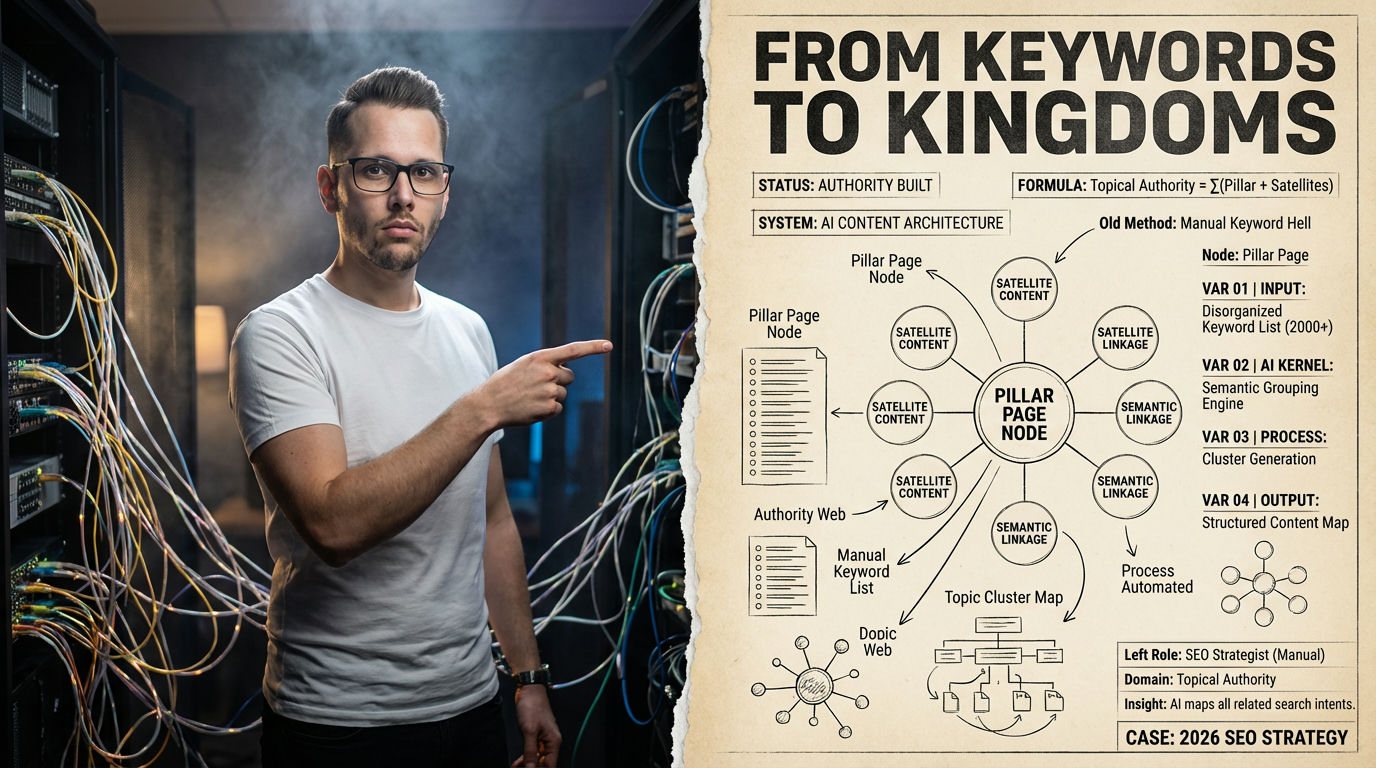

This is the shift from “prompt engineering” to context design. It’s about building a dynamic ecosystem for your AI, not just static instructions [1]. We’re talking about chaining prompts together, allowing each output to feed into the next input. This builds a rich, evolving context for the AI. It learns as it goes, making subsequent outputs more nuanced and specific.

“The quality of an AI’s output is directly tied to how you ask a question or give instructions.”

— Refonte Learning, Context Design Expert

Think of it like this: instead of writing one giant prompt for an entire 3,000-word blog post, you have a series of prompts. One for research summary, another for outline generation, then one for section expansion, and finally, one for transitions and polish. Each step has its own tailored prompt [1]. This multi-step reasoning ensures greater coherence and accuracy, making your content far more robust. It also allows for easier mid-course corrections.
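The series of prompts described above can be sketched as a simple loop where each output feeds the next prompt. The stage names follow the text; the template wording, the `run_chain` name, and the `ask` callable (your own function that sends a prompt to a model and returns its reply) are assumptions for illustration.

```python
from typing import Callable

# Stage order follows the text: research, outline, section expansion, polish.
STAGES = [
    ("research", "Summarize the key facts a reader needs about: {topic}"),
    ("outline", "Using this research summary, outline the post:\n{previous}"),
    ("sections", "Expand each outline item into a full section:\n{previous}"),
    ("polish", "Add transitions and polish this draft:\n{previous}"),
]


def run_chain(topic: str, ask: Callable[[str], str]) -> dict[str, str]:
    """Run each stage in order, feeding the previous output into the next prompt."""
    outputs: dict[str, str] = {}
    previous = ""
    for name, template in STAGES:
        previous = ask(template.format(topic=topic, previous=previous))
        outputs[name] = previous
    return outputs


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for a real model call."""
    return f"[{len(prompt)} chars in]"


results = run_chain("prompting trends", fake_model)
```

Returning every stage's output, not just the final draft, is what makes mid-course corrections cheap: you can fix the outline and rerun only the later stages.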

The Repetition Trick: My AI Kept Forgetting What I Told It

I was pulling my hair out last year trying to get the AI to maintain a very specific, slightly quirky tone for a series of posts. I’d set it up perfectly in the initial prompt, but by paragraph three, the tone would drift. By paragraph five, it was completely gone. The AI just kept forgetting what I told it. It was so frustrating. I’d spend hours editing for tone alone. This problem wastes time. AI loses coherence over long outputs if you don’t reinforce key directives, leading to inconsistencies that require heavy human editing.

Turns out, there’s a simple, almost stupid trick: repetition. Seriously. If you have a critical instruction, repeat it. Not just once, but maybe two or three times, especially across chained prompts. Marius Ursache found that repeating key instructions boosts prompt performance by almost five times for sustained long-form quality [5]. It forces the AI to keep that instruction at the forefront of its processing. It’s like a parent constantly reminding a kid to clean their room. Eventually, it sticks.
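One way to apply the repetition trick across chained prompts is to wrap every step so the critical instruction appears both before and after it. This is a sketch under my own assumptions, not the method from the cited source: the `reinforce` helper, the labels, and the example tone directive are all hypothetical.

```python
def reinforce(step_prompt: str, directive: str) -> str:
    """Wrap a step prompt so the key directive appears both before and after it."""
    return (
        f"Key instruction: {directive}\n\n"
        f"{step_prompt}\n\n"
        f"Reminder: {directive}"
    )


# Hypothetical tone directive and steps for a series of posts.
TONE = "Write in a dry, slightly quirky first-person voice."
steps = ["Draft the introduction.", "Draft the section on pricing."]
prompts = [reinforce(step, TONE) for step in steps]
```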

This technique is a lifesaver for maintaining things like specific brand voice, strict formatting requirements, or crucial factual constraints over thousands of words. It sounds too simple to work, but it really does. I started doing this, and the AI’s ability to maintain tone and adhere to specific stylistic choices dramatically improved. It cut my editing time by a good 30 minutes per post, which adds up fast. Give it a shot. It’s almost magical.

Multimodal & AI-Assisted: The Day Text-Only Content Felt Ancient

Remember when blog posts were just plain text? Feels like a long time ago, right? Last month, I was working on a product review, and the AI generated a perfectly good text description. But it felt flat. When I added an image prompt, telling the AI to “revise the 2000-word draft to match the visual style in the attached image,” the output was incredible [1]. It suddenly sounded like it was describing a product from that image. That was the day text-only content felt ancient, because multimodal prompting opens up a whole new level of contextual relevance and engagement.

This is the power of multimodal prompting. It’s not just about text inputs anymore. You can integrate images, audio, or even video to enrich the context for the AI. This means your blog posts can be visually enhanced and far more engaging. Think about explaining complex data with a visual chart, then using that chart as an input to get AI to write a more descriptive, integrated analysis. This also extends to using an AI content generator for the content itself.
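Mechanically, a multimodal prompt is just an image and a text instruction sent together. The Python sketch below shows the general shape using base64 encoding from the standard library. The dict layout (`parts`, `kind`, `data_base64`) is a generic placeholder I made up for illustration, not any provider's real schema, so map it onto whatever format your model's API documents.

```python
import base64


def image_and_text_message(image_bytes: bytes, mime_type: str, instruction: str) -> dict:
    """Bundle an image and a text instruction into one user message.

    The dict layout is a generic placeholder, not a specific provider's schema.
    """
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "role": "user",
        "parts": [
            {"kind": "image", "mime_type": mime_type, "data_base64": encoded},
            {"kind": "text", "text": instruction},
        ],
    }


# Placeholder bytes stand in for reading a real image file from disk.
message = image_and_text_message(
    b"\x89PNG placeholder",
    "image/png",
    "Revise the 2000-word draft to match the visual style in the attached image.",
)
```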

The future also involves AI-assisted prompting. This is where the AI acts as a “co-pilot,” suggesting refinements to your prompts on the fly [1]. It reduces trial and error, making the process faster and more intuitive. In 2026, this collaborative human-AI approach is becoming standard. It’s about working with the AI, not just at it. This synergy saves massive amounts of iteration time.

Prompting Evolution: Performance Gains (2026)

| Technique | Complexity | Avg. Quality Lift | Time Saved (est.) |
|---|---|---|---|
| Basic Prompt | Low | 10-20% | ~0% |
| System Prompt | Medium | 50-80% | 20-30% |
| Meta-Prompting | High | 85-95% | 30-50% |

What I would do in 7 days to implement advanced prompting:

- Day 1-2: Master System Prompts. Understand the six core elements: role, task, context, examples, format, constraints [3]. Write down your ideal system prompt for your primary content type.

- Day 3: Practice Chain-of-Thought. Take a complex long-form idea and break it into 3-5 distinct, sequential prompts. Generate content for each step.

- Day 4-5: Experiment with Self-Reflection. Append self-critique instructions to your prompts. Evaluate how much this improves accuracy and depth.

- Day 6: Dive into Meta-Prompting. Use an AI to generate prompt variations for a difficult content piece. Compare and synthesize the best elements.

- Day 7: Explore Multimodal. Try incorporating a relevant image alongside a text prompt. See how it changes the AI’s contextual understanding.

Your Advanced Prompting Checklist

- Define a clear AI persona and role for every long-form task.

- Break complex content into smaller, chained prompts for logical flow.

- Implement self-reflection steps for AI to critique its own work.

- Experiment with meta-prompting to optimize your prompt templates.

- Use repetition patterns for critical instructions to maintain coherence.

- Integrate multimodal inputs like images for richer content context.

- Continuously iterate on your prompt designs based on output quality.

- Train your team on new prompting techniques to scale efforts.

How this guide was verified

Verified Sources

- [1] Advanced Prompting Techniques 2026: Comprehensive guide on advanced prompting techniques including system prompts, meta-prompting, and self-reflection.

- [2] Advanced Prompting Guide for AI-Assisted Engineering: Discusses engineering-focused views on prompting, graph-based approaches, and context design.

- [3] Your 2026 Guide to Prompt Engineering: Provides a universal framework and core elements for effective prompt engineering across various models.

- [4] AI Prompt Engineering Cheat Sheet: Offers practical frameworks and cheat sheets for improving AI interactions and content workflows.

- [5] Advanced Prompting: Repetition: Details a specific technique that boosts prompt performance through repetition patterns for sustained output quality.

Frequently Asked Questions About Advanced Prompting

Why are my AI-generated blog posts so generic?

Your posts are likely generic because you’re using basic, one-shot prompts. These lack the detailed context, persona, and constraints that advanced techniques provide. Without specific guidance, AI defaults to safe, generalized content.

What is the most effective advanced prompting technique for long-form content?

Combining system prompts with chain-of-thought and self-reflection is highly effective. System prompts define the AI’s role, chain-of-thought breaks down complex tasks, and self-reflection ensures accuracy and nuance throughout the long output.

How often should I refine my system prompts?

You should refine your system prompts whenever your content goals shift, your target audience evolves, or you notice inconsistencies in AI output. Treat them as living documents that adapt with your content strategy, ideally reviewing them quarterly or after major projects.